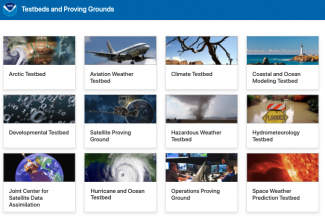

NOAA’s testbeds and proving grounds (NOAA TBPG) are an important link between research advances and applications, and especially NOAA operations. Some are long-recognized, like the Developmental Testbed Center (DTC), while others have been chartered more recently. With the 2015 launch of the Arctic Testbed in Alaska, twelve NOAA TBPG follow execution and governance guidelines to be formally recognized by NOAA. These facilities foster and host competitively-selected, collaborative transition testing projects to meet NOAA mission needs. Projects are supported through dedicated or in-kind facility support, and programmatic resources both internal and external to NOAA. Charters and additional information on NOAA TBPG, as well as summaries of recent coordination activities and workshops, are posted at the web portal, www.testbeds.noaa.gov.

Along with adopting systematic guidelines for function, execution, and governance of NOAA TBPG, in 2011 NOAA instituted formal coordination among the TBPG to better leverage progress across the spectrum of testing and to provide a consistent voice and advocacy for programs and practices involving the TBPG. The coordination committee hosts annual workshops featuring collaborative testing on high-value mission needs, fosters practices consistent with rigorous, transparent testing and increased communication of test results, and provides a forum to advance program initiatives in transitions of research to operations and of operations to research.

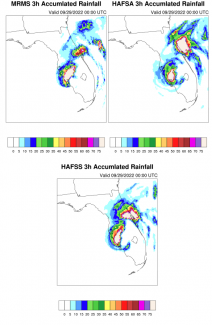

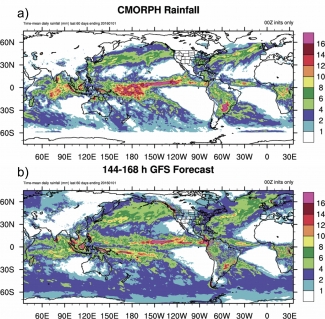

NOAA TBPG conduct transition testing to demonstrate the degree of readiness of advanced research capabilities for operations and applications. Over the past two years, these facilities completed more than 200 transition tests, demonstrating readiness for NOAA operations for more than 70 candidate capabilities. More than half have already been deployed. Beyond the simple transition statistics, NOAA TBPG have generated a wealth of progress in developing science capabilities for use by NOAA and its partners through more engaged partnerships among researchers, developers, operational scientists and end-user communities. Incorporating appropriate operational systems and practices in development and testing is a key factor in speeding the integration of new capabilities into service and operations.

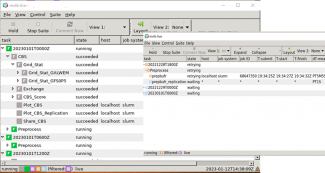

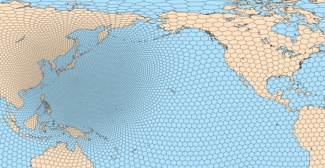

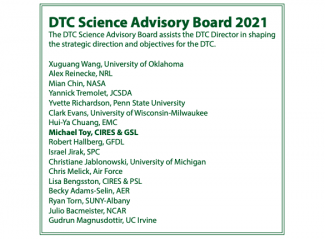

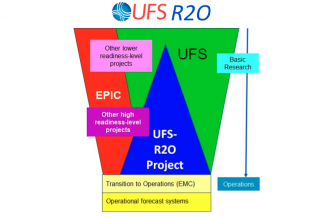

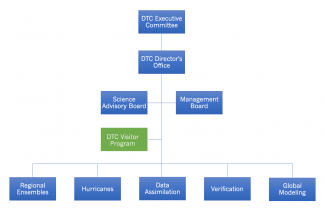

DTC, in collaboration with public and private-sector partners, plays an increasingly important role in NOAA transitions of advanced environmental modeling capabilities to operations, providing rigorous testing to evaluate performance and potential readiness for NOAA operations. Readiness criteria include capability-specific metrics for objective and subjective performance, utility, reliability and software engineering/production protocols. DTC facilitates R&D partners’ use of NOAA’s current and developmental community modeling codes in related research, leading to additional evaluation and incorporation of partner-generated innovations in NOAA’s operational models.

NOAA programs that have recently supported projects conducted at NOAA TBPG, and especially at DTC, include the Next Generation Global Prediction System (NGGPS), Collaborative Science and Technology Applied Research Program, Climate Program Office, the US Weather Research Program, and the Hurricane Forecast Improvement Program. Under NGGPS auspices, the DTC added a new unit for testing prototypes for NOAA’s next global prediction system. DTC’s contributions to the success of NGGPS will be the foundation for improved forecasts in critical mission areas such as high-impact severe/extreme weather in the 0-3 day time frame, in the 6-10 day time frame, and for weeks 3-4. As chair of NOAA’s TBPG coordinating committee, I am excited about the tremendous opportunity and capability that the DTC brings to these efforts to enhance NOAA’s science-based services.