Despite significant improvements in model development and computational resources, forecast issues persist in numerical weather prediction (NWP) models and Earth system models (ESMs), including the Unified Forecast System (UFS). Hierarchical System Development (HSD) (Ek et al. 2019) is an efficient approach to model development that gives the community multiple entry points for research efforts, spanning simple processes to complex systems. It accelerates research-to-operations-to-research (R2O2R) by facilitating interactions between a broad scientific community and the operational community.

Lead Story

A long-term vision of Hierarchical System Development for UFS

The Developmental Testbed Center (DTC), in collaboration with the Earth Prediction Innovation Center (EPIC), prepared a white paper describing a long-term vision of HSD for the UFS and a plan for its phased implementation. This paper outlines proposed hierarchical axes relevant to the UFS, HSD capabilities that currently exist in the UFS, and recommendations for future development by EPIC aligned with each axis. A survey on HSD for UFS was distributed to a broad research and operational community to collect valuable insight and feedback on participants' experience with, and use of, HSD tools, testing infrastructure, current and future capability needs, and perceived gaps to help inform the future HSD within the UFS. The results were used to inform the white paper and prioritize the proposed recommendations.

Several unique perspectives exist for this topic, where hierarchical systems have been defined by axes such as model complexity (Bony et al. 2013), model configuration (Jeevanjee et al. 2017), or principles of large-scale circulations (Maher et al. 2019). Building upon these perspectives, the white paper introduces four main axes that can be applied to the UFS framework. The first is sample size, where the common approach during the model issue-identification stage is to start from one case study and expand to multiple similar cases to determine any systematic biases. The second is hierarchy of scales, spanning coarse-to-fine grid spacing and global-to-regional scales, including dynamic downscaling techniques such as nesting and variable-resolution modeling. The third is simulation realism, which refers to the simplification of models to help improve theoretical understanding of atmospheric processes and interactions. The fourth is mechanism/interaction denial, where the model can be configured such that any given mechanism(s) can be turned off and replaced by data models to examine the impact of specific mechanisms on atmospheric or other model component processes.

Key recommendations, as well as level of effort and necessity within each of the axes, were then prioritized to help inform the future progress of the UFS HSD (Fig. 1). To address sample size, a compilation of case studies that represent model issues is recommended to be developed and maintained to help facilitate development and improvement of modeling systems. These cases should span from single cases to multiple similar cases, and from short-term runs to long-term runs. The priority for hierarchy of scales is to include the current capabilities of nesting in publicly released applications and to establish sub-3-km capabilities. Mechanism/interaction denial recommendations include continued enhancements to the Common Community Physics Package Single Column Model (CCPP-SCM), capabilities for removing feedback in a coupled model system, such as CDEPS, and continued development of data assimilation. A highly configurable framework for idealized simulations is desired for simulation realism.

The vision and ongoing development for the HSD testing framework by the EPIC team, in collaboration with the UFS community, is to accelerate the research and development capabilities of the UFS and to facilitate the day-to-day development work for the UFS. This will increase the readiness of the UFS and its applications for releases and deployments.

References

Bony, S., and Coauthors, 2013: Monograph on Climate Science for Serving Society: Research, Modelling and Prediction Priorities.

Ek, M., and Coauthors, cited 2023: Hierarchical System Development for the UFS. [Available online at https://ufscommunity.org/articles/hierarchical-system-development-for-the-ufs/.]

Jeevanjee, N., P. Hassanzadeh, S. Hill, and A. Sheshadri, 2017: A perspective on climate model hierarchies. Journal of Advances in Modeling Earth Systems, 9, 1760-1771, https://doi.org/10.1002/2017MS001038.

Maher, P., and Coauthors, 2019: Model Hierarchies for Understanding Atmospheric Circulation. Reviews of Geophysics, 57, 250-280, https://doi.org/10.1029/2018RG000607.

Director's Corner

Israel Jirak

DTC plays an important role in model development and evaluation for NOAA and the broader Unified Forecast System (UFS) community. My first interaction with DTC was back in 2010 in the Hazardous Weather Testbed (HWT), when the Method for Object-Based Diagnostic Evaluation (MODE) was being tested for convective applications. MODE is part of DTC’s Model Evaluation Tools (MET) and serves as an innovative alternative to traditional grid-based metrics for model evaluation. MODE offers a unique perspective on evaluating different object attributes (e.g., size, shape, and orientation) and offers the flexibility to match forecast objects to observed objects, which is important for convection-allowing model (CAM) simulations where “close” forecasts (as defined by the user) can be counted as a hit. Another notable DTC project on model evaluation in the HWT was the development of the initial scorecard for CAMs. This project explored the creation of a short list of the most relevant weather parameters used for evaluating CAMs that should be included in a scorecard when comparing model performance (e.g., prior to operational implementation).

DTC also contributes to model development activities for NOAA and the broader community. For example, DTC has made significant contributions to the Common Community Physics Package (CCPP) to ensure that the latest physics parameterization schemes are made available to the UFS community. Staff at DTC have also tested and implemented stochastic physics schemes in legacy modeling systems that enabled these options to be ported into the UFS framework, which is an important step to increasing forecast diversity in a single-model, single-physics ensemble design. Another ongoing project at DTC involves the exploration of initial-condition uncertainty in a CAM ensemble to improve probabilistic forecasts from next-generation UFS systems, like the Rapid Refresh Forecast System (RRFS).

After serving on the DTC Science Advisory Board for the past three years, I see that DTC is well positioned and poised to continue expanding its role in contributing to model evaluation and development activities in NOAA and the UFS community. My experience with the operational side of NOAA suggests that DTC has tremendous potential in assisting with model development, testing, and evaluation of the next-generation operational forecast systems. In my opinion, DTC can expand its role in the research-to-operations space by actively engaging and participating in real-time evaluation activities at other NOAA testbeds, whether that be contributing a model run with physics upgrades or generating verification statistics for next-day evaluation.

Who's Who

Weiwei Li

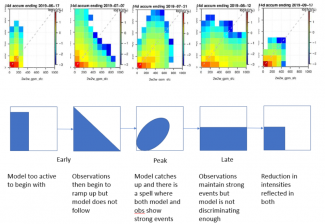

Weiwei Li joined the Research Applications Laboratory at NCAR in 2018. She leads and contributes to multiple projects focused on assessing model physics performance to inform their improvements in a modeling/forecasting system. She is currently working on testing and evaluation of the physical parameterizations, involving two major modeling systems, i.e., the Unified Forecast System (UFS) and the Model for Prediction Across Scales (MPAS). Her team uses partially idealized simulations to isolate problematic areas in a given model, particularly the physics component, where most physical processes are parameterized. She compares simulated physical processes against reliable benchmarks, such as observations, to inform areas for improvement.

This is a good fit for Weiwei as she is a born investigator. She has identified as an introvert for as long as she can remember. She was fascinated by science books and mysteries. This fascination formed the basis for her love of all forms of investigations and challenges because they feed her passion for problem solving. Her original plan for college was to become either a doctor or an archeologist. But somehow, she accidentally enrolled in the department of atmospheric sciences, not even realizing she had listed it in her college application. However, she learned to enjoy what she was studying. Because this field is unique, she became fascinated by something entirely new instead of a subject she had preconceived notions about.

When asked how she describes her work to others, Weiwei explains that weather and climate models continue to suffer from systematic biases, many of which are related to missing or poorly represented physical processes and deficiencies in the underlying model physics. Process-based testing and evaluation of physics are essential for identifying and understanding the causes of model errors and biases and for improving the overall performance of a weather/climate model, while ensuring that model adjustments or tunings can be justified on physical grounds. So, it is critical to test and evaluate model physics components, their interactions, and their impact on the overall performance of a model, and to use process-based testing and evaluation to advance understanding of physical processes.

The challenge of her work is also the most rewarding. Success requires deep teamwork, collaborations, and close coordination, all at a very fast pace. Another big challenge is knowing that one has to understand almost every aspect of a given model to distill and interpret information out of complicated processes in our Earth system to provide an objective evaluation of a modeling system.

When she isn’t assessing model performance, she’s juggling her work/life balance. Her daughter is entering kindergarten, and she tries to squeeze in some lap swimming. She’s a classical pianist, who’s also harboring a wish to play more jazz and occasionally plays duets with her daughter. She’s an avid reader of books about animals, culture, critical writing, traveling, and mysteries, of course. In addition to swimming, she loves yoga and barre (as she was a ballet student as a child). As fall approaches, she has a head full of home improvement and interior design ideas ready to go.

Bridges to Operations

Informing and Optimizing Ensemble Design in the RRFS

The transition from current operational convection-allowing models (CAMs) to the Rapid Refresh Forecast System (RRFS) involves a significant paradigm shift in CAM ensemble forecasting for the US research and operational communities. In particular, implementing a single-dycore and single-physics RRFS will allow a number of deterministic modeling systems to be retired, as well as the multi-dycore and multi-physics High-Resolution Ensemble Forecast (HREF) system. Such a change raises a number of ensemble design questions that need to be answered to create an RRFS ensemble with sufficient spread and forecast accuracy. Single-dycore and single-physics CAM ensembles are known to lack sufficient spread to account for all potential forecast solutions; therefore, a concerted research effort is necessary to evaluate potential options to address these concerns.

To this end, the DTC has been conducting two RRFS ensemble design assessments using both previous real-time data and retrospective, cold-start simulations in an operationally similar configuration. The first evaluation assessed time-lagging for the RRFS, with quantitative comparisons conducted between two RRFS-based ensembles run in real-time for the 2021 Hazardous Weather Testbed Spring Forecasting Experiment (HWT-SFE).

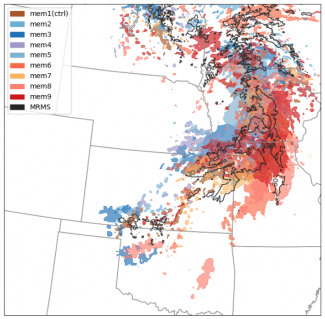

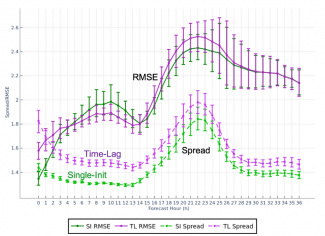

A nine-member time-lagged ensemble was created for a period of 13 days during the 2021 HWT-SFE by combining five members initialized at 00Z plus four members from the previous 12Z initializations. While the time-lagged members contain longer lead times, they still provide valuable predictive information, and so the 5+4 constructed time-lagged (TL) ensemble’s skill was compared with the full single-init (SI) ensemble, where all nine members came from the initialization at 00Z.
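The 5+4 construction described above can be illustrated with a minimal Python sketch. It assumes hourly forecast output stored as NumPy arrays with dimensions (member, lead hour, grid point); the function name `build_time_lagged_ensemble` and the array layout are hypothetical and are not taken from the DTC workflow. The key step is shifting the older initialization by the lag so that both sets of members refer to the same valid times.

```python
import numpy as np


def build_time_lagged_ensemble(current_init, previous_init, lag_hours,
                               n_current=5, n_previous=4):
    """Combine members from two initializations on a common valid-time axis.

    current_init  : array (members, lead_hours, ...) from the newer cycle (e.g., 00Z)
    previous_init : array (members, lead_hours, ...) from the older cycle (e.g., previous 12Z)
    lag_hours     : hours between the two initializations (12 in the 00Z/12Z case)

    Assumes hourly output, so a lead-time index equals the lead time in hours.
    """
    # The older cycle must be offset by the lag so that index t in both
    # arrays refers to the same valid time.
    n_valid = min(current_init.shape[1], previous_init.shape[1] - lag_hours)
    if n_valid <= 0:
        raise ValueError("previous initialization does not overlap the current one")

    newer = current_init[:n_current, :n_valid]
    older = previous_init[:n_previous, lag_hours:lag_hours + n_valid]

    # Stack along the member axis: 5 current + 4 lagged = 9 members by default.
    return np.concatenate([newer, older], axis=0)
```

With a 24-hour forecast from the newer cycle and a 36-hour forecast from the cycle 12 hours earlier, this would return nine members covering the same 24 valid hours.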

While the primary goal of a time-lagged ensemble is to derive additional predictive information from older initializations without the added cost of numerical integration, the results revealed that a set including TL members can contribute additional spread when compared to an SI ensemble of the same size, with little to no degradation in skill. This result was observed in most variables, atmospheric levels, and ensemble metrics, including evaluations of spread and accuracy, reliability, Brier score, and more. Almost all metrics exhibited a matched (and occasionally improved) level of skill and reliability between the TL and SI experiments, while many variables showed a statistically significant improvement to spread.
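For readers less familiar with the metrics named above, the following Python sketch shows one common way to compute ensemble spread and the Brier score. It is illustrative only: the function names are hypothetical, the formulas are the standard textbook definitions, and the evaluation described in this article may have used different tools and settings.

```python
import numpy as np


def ensemble_spread(ens):
    """Domain-averaged ensemble spread.

    ens : array (members, ...) of forecasts valid at the same time.
    Returns the square root of the mean ensemble variance (ddof=1).
    """
    return float(np.sqrt(np.mean(np.var(ens, axis=0, ddof=1))))


def brier_score(ens, obs, threshold):
    """Brier score for the event 'value >= threshold'.

    The forecast probability is the fraction of members exceeding the
    threshold; the observed outcome is 1 where the event occurred, else 0.
    """
    prob = np.mean(ens >= threshold, axis=0)
    outcome = (obs >= threshold).astype(float)
    return float(np.mean((prob - outcome) ** 2))
```

Lower Brier scores indicate better probabilistic forecasts, while larger spread is only beneficial when it is matched by the ensemble's actual error, which is why spread and accuracy are usually examined together.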

In preparation for the first implementation of the RRFS, results were communicated to model developers and management at NOAA/GSL and NOAA/EMC, the principal laboratories working on the system. Due to computational constraints, and informed by our findings, a decision was made to pursue the use of time-lagging as part of the ensemble design of RRFSv1.

A second evaluation will explore the impact of initial condition (IC) uncertainty and stochastic physics in retrospective cold-start simulations. Employing initial condition perturbations from GEFS forecasts, these experiments are run with and without stochastic physics to isolate the impacts of both uncertainty representation methods, investigate their potential optimization, and examine their evolution as a function of forecast lead time. Given these results, in combination with the time-lagging experiments, we hope to help address the pressing need for operational systems to produce an adequate sample of potential outcomes while balancing the constraints of computational cost.

Visitors

Developing a METplus use case for the Grid-Diag tool

Marion Mittermaier’s visitor project focused on developing a METplus use case for the Grid-Diag tool, which creates histograms for an arbitrary collection of data fields and levels. Marion used the Grid-Diag tool to investigate the relationship between the forecasted and observed precipitation accumulations for GloSea5, the UK Met Office ensemble seasonal prediction system, and how the relationship evolved over time. The project is a sub-task of a larger body of work that uses several METplus capabilities with the objective of exploring the predictability of dynamical precursors of flooding in the Kerala region of India, a region that experienced severe flooding in successive monsoon seasons between 2018 and 2020 (see Figure).

Marion was surprised to find the level of agreement between the forecast and observations was very high, even without any form of hindcast adjustment. The Grid-Diag tool has shown that it can provide very useful and swift analysis of the associations between variables.
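The kind of association analysis described here can be pictured as a joint histogram of forecast and observed values. The short Python sketch below uses NumPy to count how often forecast and observed accumulations fall into each pair of bins. It is a conceptual illustration only and does not reproduce the Grid-Diag tool, its configuration, or its output format; the function name and bin edges are hypothetical.

```python
import numpy as np


def joint_histogram(forecast, observed, bin_edges):
    """Count grid points falling in each (forecast bin, observed bin) pair.

    forecast, observed : arrays of the same shape (e.g., precipitation in mm)
    bin_edges          : increasing sequence of bin edges shared by both fields
    Returns an integer array with shape (n_bins, n_bins); rows index the
    forecast bin and columns index the observed bin.
    """
    forecast = np.asarray(forecast, dtype=float)
    observed = np.asarray(observed, dtype=float)
    counts, _, _ = np.histogram2d(forecast.ravel(), observed.ravel(),
                                  bins=[bin_edges, bin_edges])
    return counts.astype(int)
```

Counts concentrated along the diagonal of the returned array indicate close agreement between forecast and observed values, while off-diagonal counts reveal over- or under-forecasting in particular ranges.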

Often, other opportunities for collaboration present themselves on visits, and sometimes simple in-person communication can lead to a greater understanding of the models and/or software and tools developed at the DTC, which was definitely the case during Marion’s visit in August 2022.

METplus’s lead software engineer, John Halley Gotway, “really enjoyed brainstorming novel applications of the TC-Diag tool with Marion, and doing so face-to-face through the DTC visitor program made for a very fruitful collaboration! Getting direct and detailed feedback about METplus from engaged scientists really helps inform future directions.”

Marion’s visit also provided her with an opportunity to learn about and explore a relatively new METplus tool, Multi-variate MODE (Method for Object-based Diagnostic Evaluation), which provides the capability to define objects based on multiple fields. Marion commented that “I’ve now successfully processed 6 months’ worth of forecasts using the complex use case. The analyzing process commences now, but it’s pretty cool to have come this far. Mountains of output have been generated. I wouldn’t have been able to do this without the time [Tracy] offered to walk me through it and I want to thank [Tracy] again. I can’t begin to say how valuable the visit last summer was. The time I spent with [Tracy], even though it was only an hour or two, was some of the “gold dust” that one hopes to find on these trips. It might not have been at the very top of my list of objectives for the trip but it has certainly contributed to making it the most productive trip in terms of “figuring stuff out” that I’ve learned. It’s proof that the visitor program does work but it wouldn’t work without all the people who are willing to give up their time to speak to us. That session made a very significant “penny drop” in my brain about METplus in a lot of ways. It’s like something clicked.”

The benefits from these visits go both ways. Tracy Hertneky, one of DTC’s scientists, commented, “It was lovely to meet and work with Marion, even in the brief time spent guiding her through METplus and in particular, the complex and relatively new Multi-variate MODE use case, which ingests 2+ fields to identify complex ‘super’ objects. I was happy to share my knowledge and expertise with her as I feel that these connections are one of the most valuable aspects of our work. As the multivariate MODE tool is enhanced, Marion and her colleagues may even be able to test the tool and provide valuable outside feedback on its usage.”

Community Connections

METplus Training Expanded

The METplus team launched part one of the METplus Advanced Training Series this past spring, which focused on Prototypes in the Cloud, Subseasonal to Seasonal (S2S) Diagnostics, and Coupled Model Components. The intent of the METplus Advanced Training Series was to extend beyond the Basic Training Series, held during the winter and spring of 2021-2022. The series will resume with Part Two on Wednesday, October 4th, 2023, and will focus on evaluating Fire Weather forecasts and the Seasonal Forecast System (SFS). It will also cover Python Embedding, Ensembles, and Use of Climatologies. The five remaining sessions will run virtually on Wednesday mornings every other week beginning at 9:00 am MST for two hours. Those interested in participating can visit the Registration page to sign up for the fall sessions.

In a related activity, the METplus team has been working on significant improvements to the Online Tutorial and connections to the Users’ Guides. A focus group that convened during Spring 2023 provided many great suggestions for how to make the METplus Support and Training more effective. Over the summer, John Opatz, Brianne Nelson, and Barbara Brown worked tirelessly to execute the most impactful suggestions. They are currently conducting a second focus group to determine whether their effort resulted in marked improvements. Please watch for a roll-out of our updated online training and documentation in the fall.

Did you know?

Internship Opportunity

The Lapenta internship invites undergraduate (sophomore and junior) as well as graduate students from all STEM disciplines (marine science, atmospheric science, engineering, social science, applied math, etc.) to participate in 10-week (early June to mid-August) projects that span all five line offices and the Office of Marine and Aviation Operations. These projects are high-impact, short-term research-to-operations (R2O) efforts where students learn coding and networking side-by-side with many government employees. The application period starts on October 1, 2023, and closes on January 3, 2024. FAQ sessions will be held on September 27, October 16, November 3, and December 18. Interns will be chosen by late February and, depending on the project/mentor and intern needs, projects will be undertaken virtually or in person at locations such as College Park, MD; Miami, FL; Boulder, CO; and Seattle, WA. Completion of this internship will qualify each student for direct hire by NOAA and will provide valuable experience for future jobs in the private, academic, and public sectors. Visit the Lapenta program website and application site to learn more.
