NCAR and NOAA are each adopting a unified approach to coupled environmental modeling, and the success of both efforts depends critically on community contributions. At the end of January 2019, NCAR and NOAA signed a Memorandum of Agreement (MOA) to develop a shared infrastructure that encourages the broader community to engage in improving the Nation’s weather and climate modeling capabilities. Collaborating on a common infrastructure will reduce duplication of effort and create common community code repositories through which future research advances can more easily benefit the operational community. NOAA will also be able to draw on NCAR’s experience to provide community access to, and support for, NOAA’s operational models and tools. The MOA focuses on seven key elements of common infrastructure for the NOAA Unified Forecast System (UFS) and the NCAR Unified Community Model (UCM).

Coupling between Components - NCAR and NOAA have already developed an initial design for a new framework for coupling component models. This common mediator framework will ultimately accommodate evolving model coupling strategies, facilitating community research contributions and accelerating the transition of research into operations.

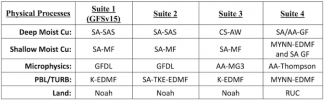

Coupling within a Component - A flexible framework for a common interface that allows physics packages to be integrated and to interoperate within component models offers many near-term and longer-term benefits. NCAR and NOAA are initially focusing on implementing such an interface for the community atmospheric models. The Common Community Physics Package (CCPP), developed by the DTC’s Global Model Test Bed (GMTB) through NGGPS funding and now being implemented in NOAA’s atmospheric models, has paved the way for a collaborative approach with NCAR’s Community Physics Framework (CPF).
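To make the idea of a common physics interface concrete, the following is a minimal Python sketch. It is not the CCPP or CPF API: the `PhysicsScheme` interface, the two toy schemes, and the `step_physics` driver are all hypothetical names invented for illustration, and the model state is reduced to a single scalar temperature. The point is only that a host model can run any ordered suite of schemes as long as each one honors the same calling convention.

```python
from typing import Dict, List, Protocol

# Hypothetical model state: named fields, reduced to scalars for brevity.
State = Dict[str, float]


class PhysicsScheme(Protocol):
    """Hypothetical common interface; NOT the actual CCPP or CPF API."""

    name: str

    def run(self, state: State, dt: float) -> State:
        """Return the fields this scheme updates over a time step dt (seconds)."""
        ...


class ConstantHeating:
    """Toy scheme: warms the temperature at a fixed rate (K/s)."""

    name = "constant_heating"

    def __init__(self, rate: float) -> None:
        self.rate = rate

    def run(self, state: State, dt: float) -> State:
        return {"temperature": state["temperature"] + self.rate * dt}


class NewtonianRelaxation:
    """Toy scheme: relaxes the temperature toward a target value."""

    name = "newtonian_relaxation"

    def __init__(self, target: float, timescale: float) -> None:
        self.target = target
        self.timescale = timescale

    def run(self, state: State, dt: float) -> State:
        t = state["temperature"]
        return {"temperature": t + (self.target - t) * dt / self.timescale}


def step_physics(state: State, suite: List[PhysicsScheme], dt: float) -> State:
    """Host-model driver: apply each scheme in order through the shared interface."""
    new_state = dict(state)  # copy so the caller's state is left untouched
    for scheme in suite:
        new_state.update(scheme.run(new_state, dt))
    return new_state


if __name__ == "__main__":
    suite = [ConstantHeating(rate=0.5), NewtonianRelaxation(target=300.0, timescale=100.0)]
    print(step_physics({"temperature": 280.0}, suite, dt=10.0))
```

Because the driver depends only on the interface, swapping or reordering schemes means changing the `suite` list rather than the host model, which is the kind of interoperability the paragraph above describes.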