The “Building a Weather-Ready Nation by Transitioning Academic Research to NOAA Operations” Workshop was held at the NOAA Center for Weather and Climate Prediction in College Park, Maryland, on November 1–2, 2017. Organized by NOAA and UCAR, the meeting drew more than one hundred participants from universities, government laboratories, operational centers, and the private sector. Members of the organizing committee included Reza Khanbilvardi, City College of New York; Chandra Kondragunta, NOAA/OAR; Jennifer Mahoney, NOAA/ESRL; Fred Toepfer, NOAA/NWS; and Hendrik Tolman, NOAA/NWS. John Cortinas, NOAA/OAR, and Bill Kuo, UCAR, served as co-chairs. A draft of the Workshop Report is available here.

Lead Story

“Building a Weather-Ready Nation by Transitioning Academic Research to NOAA Operations” Workshop

The workshop was designed to inform the academic community about NOAA’s transition policies and processes and to encourage the academic community to actively participate in transitioning research to improve NOAA’s weather operations. It was also an opportunity to strengthen engagement between the research and operational communities.

The first day of the workshop consisted of a series of invited presentations on the policies, needs, requirements, gaps, successes, and challenges of NOAA transitions. During the second day, working groups discussed issues and made recommendations to improve the process of transitioning Research to Operations (R2O). In particular, the discussions led to several interesting suggestions on the participation of the academic community in NOAA R2O activities:

- NOAA needs to recognize that the academic community has a different reward system from that of an operational organization. Scientific publication is critical for the career advancement of university professors and students, so the academic community will be much more interested in research that can lead to publication.

- The availability of computing resources is critical for successful R2O in weather and climate modeling. Given NOAA's limited computing resources and the difficulty of obtaining the security clearance needed to use NOAA computing facilities, an alternative solution is needed. Making NOAA operational models, data, and computing resources available through the cloud is an attractive option.

- The academic community cannot work for free, so appropriate funding to support its participation in R2O is critical. Good examples include the announcements of opportunity issued by the Hurricane Forecast Improvement Project (HFIP), the Next Generation Global Prediction System (NGGPS), and the Joint Technology Transfer Initiative (JTTI).

Through NOAA support, several students were invited to participate in the workshop. One Ph.D. student from the University of Maryland shared that the workshop opened her eyes to the real-world challenges confronting our field, something she had not been able to learn in her classes. Interacting with these enthusiastic next-generation scientists, who are not afraid to tackle the challenging problems in our field, was the most rewarding part of the workshop.

Many participants commented that the workshop was informative, stimulating, and productive, as it allowed the academic community to have a direct dialogue with NOAA on research-to-operations transition issues. The participants agreed that it would be desirable to hold such a workshop every two years.

Director's Corner

DTC Plays an Increased Role in Assisting R2O

As chief of the Model Development Branch (MDB) in NOAA’s Global Systems Division (GSD), I am honored to work with many of the scientists at the DTC. The DTC has been a vital partner in the development, testing, and evaluation of the Rapid Refresh (RAP) and High-Resolution Rapid Refresh (HRRR) models, and I look forward to continuing this valuable collaboration as the community works together to define and develop the next-generation modeling system. The work performed in my branch at GSD is strongly linked to the five task areas that the DTC supports: Regional Ensembles, Hurricanes, Data Assimilation, Verification, and the Global Model Test Bed (GMTB). Each of these areas will also be an important component of the Next Generation Global Prediction System (NGGPS), currently under development.

The goals of the NWS NGGPS include not only developing a much more advanced global modeling system with state-of-the-art non-hydrostatic dynamics, physics, and data assimilation, but also fostering much broader involvement of other laboratories and the academic community. This opens the door to a new role for the DTC. While the initial objective for NGGPS was the selection of a dynamical core, other tasks are now of high priority. Among those, the selection of advanced physics parameterizations and the involvement of the community may be the most challenging. The selection of an advanced physics suite should be completed by the end of 2018 to allow rigorous testing and tuning of the complete package. It is recognized that all of the physics schemes will likely need further testing, development, and calibration before, during, and after implementation. The DTC is ideally positioned to contribute to this task.

Model physics describe the grid-scale changes in forecast variables due to subgrid-scale diabatic processes, as well as resolved-scale physical processes. Physical parameterization development has been a critical driver of increased forecast accuracy in global and regional models, as more processes are represented with a sophistication appropriate for the model resolution and vertical domain. Key atmospheric processes that are parameterized in current global models include subgrid turbulent mixing in and above the boundary layer, cloud microphysics and ‘macrophysics’ (subgrid cloud variability), cumulus convection, radiative heating, and gravity wave drag. Parameterizations of surface heat, moisture, and momentum fluxes over both ocean and land; subgrid mixing within the ocean due to top and bottom boundary layers, gravity waves, and unresolved eddies; and land-surface and sea-ice properties are also important on weather and seasonal time scales.
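As a rough illustration of the paragraph above, using a generic textbook form rather than the formulation of any specific NOAA model, the tendency of a grid-box-mean prognostic variable (written \bar{\psi} below) can be split into a resolved-dynamics term and a sum of parameterized physics tendencies, one per scheme. The symbols \mathcal{D} and \mathcal{P}_k are illustrative notation introduced here.

```latex
\frac{\partial \bar{\psi}}{\partial t}
  = \underbrace{\mathcal{D}(\bar{\psi})}_{\text{resolved dynamics}}
  + \sum_{k} \underbrace{\mathcal{P}_k(\bar{\psi})}_{\text{parameterized process } k}
```

Each \mathcal{P}_k stands for one scheme, such as microphysics, cumulus convection, radiation, or gravity wave drag, and a physics suite is the set of \mathcal{P}_k chosen to work together.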

An NGGPS modeling workshop and meetings of the Strategic Implementation Plan (SIP) Working Groups were held in August 2017 at the NOAA Center for Weather and Climate Prediction in College Park, Maryland, to finalize the SIP document, which describes projects for the development of the Unified Forecast System. The plan for future physics parameterization development described in that document lists many current DTC tasks. One possible DTC priority is to test (at multiple resolutions), evaluate, and possibly assist in tuning scale-aware and stochastic parameterizations for processes such as microphysics, cumulus convection, and gravity wave drag.

To achieve the goal of involving the broader community in the development, testing, and assessment of physical parameterizations, the GMTB was established as a new task area in the DTC. The GMTB, led by Ligia Bernardet and Grant Firl, is an ambitious project to make model development much more user-friendly and to catalyze partnerships between EMC and research groups at national laboratories and academic institutions. The GMTB aims to implement transparent, community-oriented approaches to software engineering, metrics, documentation, code access, and model testing and evaluation.

The initial charge to the GMTB was the development and community support of the Common Community Physics Package, a software and governance framework to facilitate Research to Operations (R2O) transitions of community contributions. Additionally, the GMTB is tasked with defining a hierarchy of tests (model configuration, initial conditions, etc.), iteratively exercising each candidate physics configuration over those tests, and providing assessments in an open manner, tasks that are also needed for the development of unified metrics. The GMTB has already been of great help in implementing the Grell-Freitas convective parameterization in the GFS physics package, which now runs in versions of FV3 and the GFS.

The second essential project defined by the SIP physics working group is the design of unified metrics (or at least standardized metrics, depending on the application). The development of the Model Evaluation Tools (MET), a highly configurable, state-of-the-art suite of verification tools, is another component of DTC work. Current MET development efforts focus on expanding its capabilities to cover the full range of EMC’s multiple verification packages.
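To give a flavor of the objective statistics a verification package computes, here is a minimal Python sketch of a few standard categorical scores derived from a 2x2 contingency table for a threshold event. It is an illustration only, not MET code; the function name, the "value >= threshold" event definition, and the sample data are assumptions.

```python
import math

import numpy as np


def categorical_scores(forecast, observed, threshold):
    """Standard 2x2 contingency-table scores for the event "value >= threshold".

    Illustrative sketch only; this is not MET source code.
    """
    f = np.asarray(forecast, dtype=float) >= threshold  # event forecast
    o = np.asarray(observed, dtype=float) >= threshold  # event observed

    hits = int(np.sum(f & o))
    misses = int(np.sum(~f & o))
    false_alarms = int(np.sum(f & ~o))
    correct_negatives = int(np.sum(~f & ~o))

    def ratio(num, den):
        # Return NaN instead of raising when a score is undefined.
        return num / den if den > 0 else math.nan

    return {
        "hits": hits,
        "misses": misses,
        "false_alarms": false_alarms,
        "correct_negatives": correct_negatives,
        "POD": ratio(hits, hits + misses),                   # probability of detection
        "FAR": ratio(false_alarms, hits + false_alarms),     # false alarm ratio
        "CSI": ratio(hits, hits + misses + false_alarms),    # critical success index
        "FBIAS": ratio(hits + false_alarms, hits + misses),  # frequency bias
    }


# Hypothetical forecast/observation pairs (e.g. precipitation in mm)
scores = categorical_scores([0, 5, 6, 1, 7], [0, 6, 1, 4, 8], threshold=5)
print(scores)
```

Returning NaN for undefined ratios (for example, POD when no events were observed) keeps the function from failing on cases with no events, which matters when scores are computed over many cases before being aggregated.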

With existing task areas spanning ensemble work, stochastics, verification software development, community support, data assimilation, and the GMTB, the DTC will be able to play an increased role in assisting the R2O transfer of developments and improvements in physical parameterizations. Once the advanced physics suite is selected, this role will require gaining expertise in the chosen parameterizations. The DTC can conduct carefully controlled, rigorous testing, including the generation of objective verification statistics, and provide the operational community with more guidance for selecting new NWP technologies with potential value for operational implementation.

Who's Who

Grant Firl

Like that of many atmospheric scientists, Grant's long and winding path into the field began at a young age. He split his childhood between watching occasional storms develop over the Sandia Mountains near Albuquerque, NM, and the frequent passage of MCSs (Mesoscale Convective Systems) and derechos over the forested hills of southwest Missouri.

Grant credits his interest in science and math to a string of inspirational teachers from elementary school onward, but his focus on meteorology grew out of an interest in the discipline he shared with his dad, who obtained a B.S. in meteorology in the late 1970s. In college, his interests shifted from severe weather to atmospheric modeling, and he majored in mathematics and minored in computer science in preparation for graduate studies. He obtained his M.S. and Ph.D. in atmospheric science under David Randall's tutelage at Colorado State University, primarily developing parameterizations for the convective planetary boundary layer using advanced techniques for predicting subgrid-scale variability.

After a postdoctoral appointment studying machine-learning algorithms for improving planetary boundary layer parameterizations, Grant was hired as a Project Scientist I at NCAR in the fall of 2015 to work on the Global Model Test Bed (GMTB) project. He now serves as the Node Activity Coordinator for this project. For the past two years, Grant has worked with the GMTB team to design and implement a Common Community Physics Package and an Interoperable Physics Driver. The team is also building a testing platform to experiment with promising advances in physical parameterizations and to provide this shared capability to the broader community. Grant has thoroughly enjoyed his time working in the DTC and says his favorite aspects of the job are the problem solving and the opportunity to work with and learn from an array of like-minded, driven, and brilliant people.

Grant married his wife, Julia, in 2009 and has a 5-year-old son (Wesley) and a 3-year-old daughter (Evelyn) who keep him on his toes outside the office. After a brief hiatus from frequent backpacking and hiking trips when his kids were very young, Grant is looking forward to returning to the mountains regularly and sharing their unique landscape and wildlife with his family. Hobbies closer to civilization include playing softball with the venerable "Hailraisers" team in Boulder and the "Cyclones" team in Fort Collins, and tinkering with various woodworking and automotive repair projects.

Bridges to Operations

DTC MET Verification Tutorial

The DTC Verification team hosted a MET tutorial at NCAR from January 31 to February 2, 2018, in conjunction with the semi-annual WRF tutorial. This event was the first in-residence MET tutorial since February 2015. There were 31 registered participants, and several new DTC staff members dropped in for relevant lectures. The tutorial included a half day of lectures on verification basics, plus two and a half days of presentations on many of the MET tools, supplemented with practical sessions that demonstrated the tools. The last day also included training on the METViewer database and display system, used for aggregating, stratifying, and plotting statistics, and on the newly developed MET+ Python wrappers.

METv7.0 was released on March 5th. For more information on MET capabilities, check out the MET Users’ Page: https://dtcenter.org/met/users/

Visitors

Variational lightning data assimilation in GSI

The launch of new observing systems offers tremendous potential to advance the operational weather forecasting enterprise. However, “mission success” is strongly tied to the ability of data assimilation systems to process new observations. One example is making the most of new measurements of lightning activity by the Geostationary Lightning Mapper (GLM) instrument aboard the GOES-16 satellite. The GLM offers the possibility of monitoring lightning activity over much of the Western Hemisphere. Although its resolution is significantly coarser than that of ground-based lightning detection networks, its lightning measurements can be particularly useful in less-observed regions such as elevated terrain or open oceans. The GLM detects changes in an optical scene that indicate the presence of lightning and produces “pictures” that can provide estimates of the frequency, location, and extent of lightning strikes. How can we capitalize on the information in these pictures of lightning events to benefit operational numerical weather prediction models, in particular at the NOAA/National Weather Service?

We enhanced the NCEP operational Gridpoint Statistical Interpolation (GSI) system within the Global Data Assimilation System (GDAS) by adding a new lightning flash rate observation operator within its variational data assimilation framework. Given the coarse resolution and simplified microphysics of the current operational global forecasting system, we designed this new observation operator to update large-scale model fields such as humidity, temperature, pressure, and wind.
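For readers less familiar with variational assimilation, the sketch below shows the basic idea in a toy setting: the analysis minimizes a cost function J(x) that balances departures from the background state xb (weighted by the background-error covariance B) against departures from the observations y, mapped through an observation operator H (weighted by the observation-error covariance R). This is a generic 3D-Var example with a static B and an assumed linear H; it is not GSI code and not the authors' lightning operator, which is nonlinear and used within an ensemble-variational system. All function names and numbers are hypothetical.

```python
import numpy as np


def cost_and_gradient(x, xb, B_inv, y, H, R_inv):
    """Variational cost J(x) and its gradient for a linear(ized) observation operator H."""
    dx = x - xb    # departure from the background (first guess)
    d = y - H @ x  # observation minus model equivalent
    J = 0.5 * dx @ B_inv @ dx + 0.5 * d @ R_inv @ d
    grad = B_inv @ dx - H.T @ R_inv @ d
    return J, grad


def variational_analysis(xb, B, y, H, R):
    """Minimize J by solving grad J = 0; the Hessian is constant when H is linear."""
    B_inv, R_inv = np.linalg.inv(B), np.linalg.inv(R)
    hessian = B_inv + H.T @ R_inv @ H
    return xb + np.linalg.solve(hessian, H.T @ R_inv @ (y - H @ xb))


def gain_form_analysis(xb, B, y, H, R):
    """Same analysis written with the gain K = B H^T (H B H^T + R)^-1."""
    K = B @ H.T @ np.linalg.inv(H @ B @ H.T + R)
    return xb + K @ (y - H @ xb)


# Hypothetical toy: a 2-element state (say, humidity and temperature anomalies)
# and one flash-rate observation related to it by an assumed linear operator H.
xb = np.array([0.0, 0.0])
B = np.array([[1.0, 0.3], [0.3, 0.5]])  # background-error covariance
H = np.array([[2.0, 1.0]])              # assumed linearized flash-rate operator
R = np.array([[0.4]])                   # observation-error covariance
y = np.array([3.0])                     # observed flash rate (arbitrary units)

xa = variational_analysis(xb, B, y, H, R)
print("analysis increment:", xa - xb)
```

Because H is linear here, J is quadratic and its minimizer follows from a single linear solve, which matches the familiar gain-matrix form of the analysis. Operational variational systems instead minimize J iteratively, using tangent-linear and adjoint versions of the observation operators.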

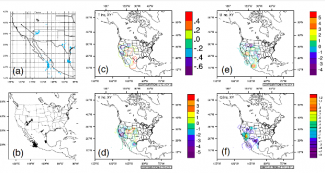

To start, we used surface-based Lightning Detection Network (LDN) data from the World Wide Lightning Location Network as a GLM proxy (Fig. 1a). The real-world latitude, longitude, and timing of total lightning strikes were extracted in a way similar to what the GLM instrument measures. These data were then converted into BUFR (a binary data format), as required for assimilation by the GSI system, and ingested as a cumulative count of geo-located lightning strikes (Fig. 1b). This lightning assimilation package is prepared to handle actual GLM observations once they are well curated, suitable for testing, and readily available to the public.
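One plausible way to turn point strikes into counts per grid cell over an assimilation window is sketched below in Python. It is a minimal illustration, not the authors' processing code: it assumes a regular latitude-longitude grid, a configurable resolution, and a half-open time window, and the function name and sample strikes are hypothetical. BUFR encoding for GSI is not shown.

```python
import numpy as np


def grid_strike_counts(lats, lons, times, t_start, t_end, res_deg=1.0):
    """Accumulate point lightning strikes into per-cell counts on a regular lat/lon grid.

    Only strikes with t_start <= time < t_end are counted (hypothetical window rule).
    """
    lats, lons, times = (np.asarray(a, dtype=float) for a in (lats, lons, times))
    in_window = (times >= t_start) & (times < t_end)

    # Regular cell edges; linspace avoids floating-point drift in the number of edges.
    lat_edges = np.linspace(-90.0, 90.0, int(round(180.0 / res_deg)) + 1)
    lon_edges = np.linspace(-180.0, 180.0, int(round(360.0 / res_deg)) + 1)

    counts, _, _ = np.histogram2d(
        lats[in_window], lons[in_window], bins=[lat_edges, lon_edges]
    )
    return counts, lat_edges, lon_edges


# Hypothetical strikes: latitude, longitude, and time in seconds since the window opened
lats = [10.2, 10.8, -5.0]
lons = [20.7, 20.1, 100.0]
times = [60.0, 1800.0, 7200.0]  # the last strike falls outside a 1-hour window
counts, lat_edges, lon_edges = grid_strike_counts(lats, lons, times, 0.0, 3600.0)
print(counts.sum(), counts[100, 200])
```

With 1° cells, the first two strikes share the cell spanning 10–11°N and 20–21°E (row 100, column 200), while the third is excluded by the time window.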

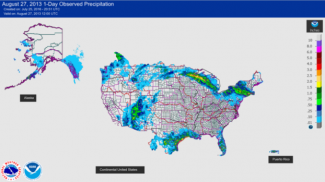

The lightning assimilation package has been fully incorporated into a version of the GSI system and is being evaluated by the GSI review committee. We are now verifying the effects of lightning observations on the forecast through global parallel experiments with the NCEP 4DEnVar system. Thus far, assessments of the processing of lightning observations and of their impact on the initial conditions of some GFS dynamical fields look promising. The analysis increments of temperature, pressure, humidity, and winds shown in Fig. 1 (c, d, e, and f), as well as the locations of the raw lightning strikes, coincide with the high-precipitation contours in Fig. 2.

In preparation for the NOAA/NGGPS FV3-based Unified Modeling System, we hope to further develop this lightning capability for the GOES GLM instrument following a hybrid (EnVar) methodology and by incorporating a lightning flash rate observation operator suitable for cloud-resolving, non-hydrostatic models that can also update cloud hydrometeor fields. Once GLM observations are available, we will evaluate their actual impact with the GSI system and assess their benefit for operational weather prediction at NCEP.

For more information, see the Joint Center for Satellite Data Assimilation (JCSDA) Quarterly, No. 58, Winter 2018: ftp://ftp.library.noaa.gov/noaa_documents.lib/NESDIS/JCSDA_quarterly/no_58_2018.pdf#page=12

Community Connections

DTC staff host AMS Short Course on Containers

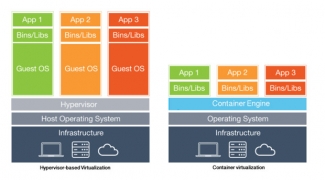

A major hurdle for running new software systems is often building and compiling the necessary code on a particular computer platform. In recent years, the concept of using “containers” has been gaining momentum in the numerical weather prediction (NWP) community. Container technology allows a complete software system (operating system, libraries, code, etc.) to be bundled and shipped to users, reducing spin-up time and making setup more efficient. A core mission of the DTC is to assist the research community in efficiently demonstrating the merits of new model innovations, and the development and support of end-to-end NWP containers directly supports that mission.

Over the past few years, a number of NWP software components (including pre-processing, the model itself, post-processing, graphics generation, and statistics computation and visualization) have been implemented in Docker containers to better assist the user community. The work conducted by DTC staff leveraged previous efforts of the Big Weather Web (http://bigweatherweb.org), which initially established software containers for the WRF Pre-Processing System (WPS), Weather Research and Forecasting (WRF) model, and NCAR Command Language (NCL) components. From there, DTC staff expanded the containerized tools to include the Unified Post-Processor (UPP), Model Evaluation Tools (MET), and METViewer. Through this complementary work, a full end-to-end NWP system was established, allowing for verification of the model output and visualization of the statistical output.

Several DTC staff members (Kate Fossell, John Halley Gotway, Tara Jensen, and Jamie Wolff) hosted a short course at the 98th AMS Annual Meeting in Austin, TX, on 6 January 2018 that offered hands-on experience with the established software containers. In preparation for the short course, an online tutorial was created that can be accessed at: https://dtcenter.org/met/docker-nwp/tutorial/container_nwp_tutorial/index.php. If you are an undergraduate or graduate student, university faculty member, or researcher interested in these new tools, please check it out! Participants in the 2018 short course were complimentary of the day-long tutorial, and the DTC plans to offer it again next year at the Annual Meeting in Phoenix, AZ. Stay tuned for more information regarding future training opportunities.

Did you know?

About Software containers

Portability is the ability to move software from one environment to another with minimal changes to the existing code. Unfortunately, portability is not easy to achieve when moving software from one Operating System (OS) platform to another, or even between versions of a single OS. Software containers are a solution to this problem: the software and its working environment can be ported from platform to platform, for example from a laptop to a desktop or HPC environment, as a single, complete unit. The container bundles the software package, configuration files, software libraries, and other binaries needed to run the software. Simply put, containerizing a package together with its dependencies creates a portable platform, and differences in infrastructure and variations in OS releases are largely abstracted away.

PROUD Awards

George McCabe is a Software Engineer III for the NSF NCAR Research Applications Laboratory whose skill and commitment have significantly improved the reliability, security, and operational readiness of DTC-supported verification systems. As the lead developer of the METplus Python Wrappers, George plays a central role in delivering a consistent, scalable, and reproducible verification system that is trusted by both research and operational communities.

George’s contributions extend beyond routine software development. He led efforts to resolve complex integration, automation, and cybersecurity challenges, keeping DTC-supported verification systems compliant, operationally viable, and on track for delivery within evolving security environments. His work has strengthened METplus as a robust, secure, and mission-ready framework while preserving usability for a broad and expanding user base.

In addition to METplus, George contributes to Air Force Verification and Validation on the Global Synthetic Weather Radar project and the Benchmarking Testing and Evaluation and Verification Framework, advancing scientifically rigorous, reproducible evaluation practices while reducing redundant effort across institutions. He is widely respected for his exceptional ability to translate complex technical and security challenges into practical, sustainable solutions, as well as for his calm, methodical approach to problem solving, particularly during high-pressure, high-impact efforts.

George’s initiative, technical leadership, and commitment to excellence consistently go beyond expectations, establishing him as a cornerstone of DTC software development and a profoundly deserving recipient of this recognition.

Copyright © 2026. All rights reserved.