Faculty and graduate students at universities typically conduct basic research to better understand the fundamental workings of their area of interest, which in our field is the atmosphere. Transitioning these findings into practical applications, including operational weather forecasting, is then done by national labs and their cooperative institutes. Yet in many cases, university researchers are working on problems that are directly relevant to operations, and have the potential (with a little help) to be considered for transition into the operational environment. How to cross the many hurdles associated with this transition, however, is not a topic that is well understood in the academic lab setting, where the project may be developed by a faculty member and one or two graduate students.

Director's Corner

Russ S. Schumacher

I've collaborated closely with forecasters and forecast centers in the past, mainly on what might be called "operationally relevant" research -- work that can inform the forecast process but isn't immediately applicable. This summer, I had my first real experience as a faculty member in formally testing a product that could be considered for operational transition. With support from NOAA's Joint Technology Transfer Initiative, we tested my Ph.D. student’s heavy rainfall forecasts at the Flash Flood and Intense Rainfall (FFaIR) experiment at the Weather Prediction Center (WPC) and Hydrometeorology Testbed during June and July of 2017.

Preparing for the experiment raised several scientific and technical issues that I was not accustomed to thinking about in an academic setting. Some were fairly mundane, like “How do we generate files in the proper format for the operational computers to read?” But others were more conceptual and philosophical: “How should a forecaster use this product in their forecast process? What should the relationship be between the forecast probabilities and observed rainfall/flooding? How can we quantify flooding rainfall in a consistent way to use in evaluating the forecasts?”

So why do I bring all of these experiences up in the “DTC Transitions” newsletter? Because one of the DTC’s key roles is to facilitate these types of research-to-operations activities for the broader community (including universities as well as research labs). One particular contribution that the DTC makes to this effort is the Model Evaluation Tools (MET), a robust, standardized set of codes that allow for evaluating numerical model forecasts in a variety of ways. For new forecast systems or tools to be accepted into operational use, they should demonstrate superior performance over the existing systems, and the only way to establish this is through thorough evaluation of their forecasts. Careful evaluation can also point to areas for additional research that can lead to further model improvements. The DTC also sponsors a visitor program that supports university faculty and graduate students to work toward operational implementation of their research findings.

Conducting research-to-operations activities in an academic setting will certainly fall outside the comfort zone of many university researchers. Furthermore, we should be sure not to lose our focus on basic research, which is often best suited to academia. But the fruits of that basic research are also often ready to take the next step to direct application and broader use, and I encourage fellow academics to test out taking that step, especially with the support and tools offered by the DTC.

Who's Who

Evan Kalina

Evan knew he wanted to be a meteorologist when he was five years old -- every type of thunderstorm that blew through Kendall, FL enamored him. When Joe Cione, a hurricane researcher, moved in across the street, his future career was sealed.

After graduating in 2010 from Florida State University with a B.S. in meteorology, Evan moved to Boulder for graduate school at the University of Colorado, where he analyzed model simulations and radar data from supercell thunderstorms. Cione serendipitously moved to Boulder about the time Evan graduated with his Ph.D. and invited Evan to do a postdoc. In that role he used similar techniques to the ones he used to study supercells and applied them to hurricanes. During his postdoc, he also became interested in writing and managing code efficiently. Joining the DTC has been a great opportunity to use and refine those skills further.

Evan currently works for the NOAA Earth System Research Laboratory Global Systems Division as the Node Activity Coordinator for the DTC Hurricane Task. His primary duty is to coordinate model development activities for the Hurricane Weather Research and Forecast (HWRF) system. He makes sure that model developers have access to the latest version of the HWRF code that runs operationally at NCEP, and that they can work within the HWRF code repository. This coordination helps transition innovations that improve the model forecast into operations. "It's satisfying to be on the cutting edge of numerical weather prediction," says Evan, "and to contribute to an operational system that is essential for protecting lives and property from hazardous weather."

Evan enjoys the physical and mental challenges of the Colorado mountains and spends most of his spare time outdoors. He likes to run, bike ride, hike, and backcountry camp. He is slowly ticking off the Fourteeners – his favorites so far have been Longs, Pikes, and Crestone Needle. About a superpower he wishes he had: "The pack that I take hiking would be a lot lighter if I didn’t have to eat or drink…"

Evan's life highlights include seeing seven tornadoes while storm chasing in southeast Colorado the day he graduated with his Ph.D., flying through Hurricane Matthew on the NOAA P-3, and traveling to Iceland in the spring of 2015.

Bridges to Operations

Evaluation of the new hybrid vertical coordinate in the RAP and HRRR

The terrain-following sigma coordinate has been implemented in many Numerical Weather Prediction (NWP) systems, including the Weather Research and Forecasting (WRF) model, and has been used with success for many years. However, terrain-following coordinates are known to induce small-scale horizontal and vertical accelerations over areas of steep terrain due to the reflection of topography in the model levels. These accelerations introduce error into the model equations and can impact model forecasts, especially as errors are advected downwind of major mountain ranges.

Efforts to mitigate this problem have been proposed, including Klemp’s smoothed hybrid coordinate, in which the sigma coordinate is transitioned to a purely isobaric vertical coordinate at a specified level. Initial idealized tests using this new vertical coordinate showed promising results, with a considerable reduction in small-scale spurious accelerations.

Based on these preliminary findings, the DTC was tasked to test and evaluate both the hybrid vertical coordinate and the terrain-following sigma coordinate within the RAP and HRRR forecast systems to assess impacts on retrospective cold-start and real-time forecasts.
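The transition from terrain-following to isobaric levels described above can be illustrated with a small sketch. It is not the code used in WRF, RAP, or HRRR. It assumes a cubic blending function B(eta) fixed by four constraints (B = 1 with unit slope at the surface, B = 0 with zero slope at the transition level eta_c). The function names, the transition level of 0.2, and the reference and model-top pressures are all hypothetical values chosen for illustration.

```python
def blend_coefficients(eta_c):
    """Coefficients of the cubic B(eta) satisfying
    B(1) = 1, B'(1) = 1, B(eta_c) = 0, B'(eta_c) = 0."""
    d = (1.0 - eta_c) ** 3
    c1 = 2.0 * eta_c ** 2 / d
    c2 = -eta_c * (4.0 + eta_c + eta_c ** 2) / d
    c3 = 2.0 * (1.0 + eta_c + eta_c ** 2) / d
    c4 = -(1.0 + eta_c) / d
    return c1, c2, c3, c4


def terrain_weight(eta, eta_c):
    """Weight given to the surface pressure at level eta.
    It is zero at and above the transition level (eta <= eta_c)."""
    if eta <= eta_c:
        return 0.0
    c1, c2, c3, c4 = blend_coefficients(eta_c)
    return c1 + c2 * eta + c3 * eta ** 2 + c4 * eta ** 3


def level_pressure(eta, p_surface, eta_c=0.2, p_top=5000.0, p_ref=100000.0):
    """Pressure (Pa) on a hybrid level. eta runs from 1 at the surface to 0
    at the model top. eta_c, p_top and p_ref are illustrative assumptions."""
    b = terrain_weight(eta, eta_c)
    return b * (p_surface - p_top) + (eta - b) * (p_ref - p_top) + p_top


def sigma_pressure(eta, p_surface, p_top=5000.0):
    """Pressure (Pa) on a pure terrain-following level, for comparison."""
    return eta * (p_surface - p_top) + p_top


if __name__ == "__main__":
    # Compare a mountain column (700 hPa surface) with a sea-level column.
    for eta in (1.0, 0.6, 0.2, 0.1):
        hybrid = level_pressure(eta, 70000.0) - level_pressure(eta, 100000.0)
        sigma = sigma_pressure(eta, 70000.0) - sigma_pressure(eta, 100000.0)
        print(f"eta={eta:.1f}  hybrid diff={hybrid:10.1f} Pa  sigma diff={sigma:10.1f} Pa")
```

In this sketch the pressure difference between the mountain column and the sea-level column vanishes on hybrid levels at and above eta_c, while the pure sigma levels still carry an imprint of the terrain all the way to the model top.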

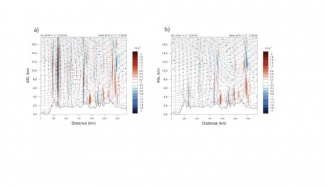

The DTC conducted several controlled cold-start forecasts and one cycled experiment with the 13 km RAP, initialized from the GFS. This sample included days with strong westerly flow across the western CONUS, favoring vertically propagating mountain wave activity. In addition, one cycled, 3-km HRRR experiment was initialized from the non-hybrid coordinate RAP. The only difference between these retrospective runs was the vertical coordinate.

This sample of forecasts indicated the hybrid vertical coordinate produced the largest impact at upper levels, where the differences in coordinate surfaces are most pronounced due to the reflection of terrain over mountainous regions. As a result, wind speeds with the hybrid coordinate were generally increased near jet axes aloft as vertical and horizontal mixing of momentum decreased when compared with the terrain-following coordinate. In addition, the depiction of vertical velocity at upper levels was greatly improved with reduced spurious noise and better correlation of vertical motion to forecast jet-like features. A corresponding improvement was found in upper-level temperature, relative humidity, and wind speed verification when using the hybrid vertical coordinate.

The hybrid vertical coordinate will be implemented in the operational versions of RAPv4 and HRRRv3 in 2018.

This work was a collaborative effort between NOAA GSD, DTC, and NCAR MMM.

Visitors

Are mixed physics helpful in a convection-allowing ensemble?

As a 2017 DTC visitor, William Gallus is using the Community Leveraged Unified Ensemble (CLUE) output from the 2016 NOAA Hazardous Weather Testbed Spring Experiment to study the impact of mixed physics in a convection-allowing ensemble. Two of the 2016 CLUE ensembles were similar in their use of mixed initial and lateral boundary conditions (at the side edges of the model domain), but one of them also added mixed physics, using four different microphysics schemes and three different planetary boundary layer schemes.

Traditionally, ensembles have used mixed initial and lateral boundary conditions. Their perturbations generally resulted in members equally likely to verify, a good quality in ensembles. However, as horizontal grid spacing was refined and the focus of forecasts shifted to convective precipitation, studies suggested that problems with insufficient spread might be alleviated through the use of mixed physics. Although spread often did increase, rules of well-designed ensembles were violated: biases tied to particular physics schemes appeared, and in some cases certain members were more likely to verify than others. Improved approaches for generating mixed initial and lateral boundary conditions for use in high-resolution models now prompt the question – is there any advantage to using mixed physics in the design of an ensemble?

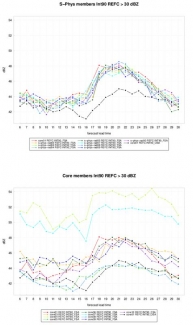

To explore the impact of mixed physics, the Model Evaluation Tools (MET) have been run for 18 members of the two ensembles for 20 cases that occurred in May and early June 2016. Standard point-to-point verification metrics such as Gilbert Skill Score (GSS) and Bias are being evaluated for hourly and 3-hourly precipitation and hourly reflectivity. In addition, Method for Object-Based Diagnostic Evaluation (MODE) attributes are being compared among the nine members of each ensemble.
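For readers unfamiliar with these point-to-point metrics, the sketch below shows how GSS and bias follow from a 2x2 contingency table at a chosen threshold. It uses the standard textbook definitions and assumes that "Bias" here means frequency bias. It is not MET code, and the function names are hypothetical.

```python
def contingency_counts(forecast, observed, threshold):
    """Count hits, false alarms, misses and correct negatives for the
    event 'value >= threshold' at matched forecast/observation points."""
    hits = false_alarms = misses = correct_negatives = 0
    for f, o in zip(forecast, observed):
        f_yes = f >= threshold
        o_yes = o >= threshold
        if f_yes and o_yes:
            hits += 1
        elif f_yes:
            false_alarms += 1
        elif o_yes:
            misses += 1
        else:
            correct_negatives += 1
    return hits, false_alarms, misses, correct_negatives


def frequency_bias(hits, false_alarms, misses):
    """Ratio of forecast events to observed events (1 means unbiased).
    Undefined (division by zero) when no events were observed."""
    return (hits + false_alarms) / (hits + misses)


def gilbert_skill_score(hits, false_alarms, misses, correct_negatives):
    """Fraction of events correctly forecast, after removing the hits
    expected by random chance. Undefined when the denominator is zero."""
    total = hits + false_alarms + misses + correct_negatives
    hits_random = (hits + false_alarms) * (hits + misses) / total
    return (hits - hits_random) / (hits + false_alarms + misses - hits_random)


if __name__ == "__main__":
    # Made-up hourly precipitation values (mm) at six matched points.
    forecast = [0.0, 5.0, 12.0, 20.0, 3.0, 15.0]
    observed = [0.0, 11.0, 14.0, 2.0, 1.0, 18.0]
    counts = contingency_counts(forecast, observed, threshold=10.0)
    print("counts:", counts)
    print("bias:", frequency_bias(*counts[:3]))
    print("GSS:", gilbert_skill_score(*counts))
```

A frequency bias above 1 means an event is forecast more often than it is observed, and a value below 1 means it is forecast less often. This is the sense in which individual ensemble members can carry systematic high or low biases.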

Preliminary results suggest that more spread is present in the ensemble that used mixed physics, and that the median values of convective system precipitation and reflectivity are closer to the observed values. However, the median values are achieved by having a few members with unreasonably large high biases that are balanced by a larger set of members suffering from systematic low biases. Is such an ensemble the best guidance for forecasters?

Accumulated measures of error from each member would suggest that the ensemble using mixed physics performs more poorly. The figure shows an example of the 90th percentile value of reflectivity among the systems identified by MODE as a function of forecast hour for the nine members examined in both ensembles. Additional work is needed along with communication with forecasters to determine which type of ensemble has the most value for those who interpret the guidance.

MET output and how the ensembles depicted convective initiation are also being examined, along with an enhanced focus on systematic biases present in different microphysical schemes. It is hoped that the results of this project will influence the design of the 2018 CLUE ensemble and that future operational ensembles used to predict thunderstorms and severe weather can be tailored in the best way possible. This visit has been an especially nice one for Dr. Gallus since he had done several DTC visits about ten years ago when the program was new, so the experience feels a little like “coming home”! The DTC staff are always incredibly helpful, and the visits are a great way to become familiar with many useful new research tools. Universities can become a bit like ghost towns in the summer, so he also enjoys the chance to get away to Boulder, with its more comfortable climate, great opportunities to be outdoors, numerous healthy places to eat, and opportunities to interact with the many scientists at NCAR.

Dr. Gallus is a meteorology professor at Iowa State University whose research has often focused on improved understanding and forecasting of convective systems. The CLUE output was provided by Dr. Adam Clark from NSSL, while observed rainfall, reflectivity, and storm rotation data were gathered by Jamie Wolff at the DTC, who is serving as his host, and Dr. Patrick Skinner from NSSL who is also working with CLUE output as a DTC visitor this year. Dr. Gallus is also working closely with John Halley-Gotway at the DTC who has provided extensive assistance with model verification via the MET and METViewer tools.

Community Connections

WRF Users' Workshop - June 2017

The first Weather Research and Forecasting model (WRF) Users’ Workshop was held in 2000. Since then, eighteen annual workshops have been organized and hosted by the National Center for Atmospheric Research in Boulder, Colorado to provide a platform where developers and users can share new developments, test results, and feedback. This exchange ensures the WRF model continues to progress and remain relevant.

The workshop program has evolved through the years. In 2006, instructional sessions were introduced, with the first focused on the newly developed WRF Pre-processing System (WPS). The number of users has grown since the WRF Version 3 release in 2008, so a lecture series on the fundamentals of physics was introduced in 2010 to train users to better understand and apply the model. Since that time, the series has covered microphysics, planetary boundary layer (PBL) and land surface physics, convection and atmospheric radiation. The series then expanded to address dynamics, modeling system best practices, and computing.

The 18th WRF Users’ Workshop was held June 12 – 16, 2017. The workshop was attended by 180 users from 20 countries, including 57 first-time attendees, and 130 papers were presented. On the first afternoon of the workshop, four lectures covered the basics of ensemble forecasting, model error, verification, and visualization of ensemble forecast products. The following days included nine sessions on a wide range of WRF model development and applications. On Friday, five mini-tutorials were offered on WRF-Hydro, Model for Prediction Across Scales (MPAS) for WRF Users, Visualization and Analysis Platform for Ocean, Atmosphere, and Solar Researchers (VAPOR), NCAR Command Language (NCL), and WRF-Python. All workshop presentations are available from http://www.mmm.ucar.edu/wrf/users/workshops/WS2017/WorkshopPapers.php.

The WRF Modeling System Development session included the annual update, plus status reports on WRF Data Assimilation (WRFDA), WRF-Chem, WRF software, Gridpoint Statistical Interpolation (GSI), Hurricane WRF (HWRF), and WRF-Hydro. A hybrid vertical coordinate was introduced in Version 3.9 for the Advanced Research WRF (ARW) that may improve prediction in the upper-air jet streak region. Another notable addition to the model is the predicted particle properties (P3) scheme, a new type of microphysics.

Both data assimilation and model physics were improved in the operational application of WRF in the Rapid Refresh (RAP) and High-Resolution Rapid Refresh (HRRR) models. Advances were made in the Grell-Freitas cumulus scheme, the Mellor-Yamada-Nakanishi-Niino (MYNN) PBL scheme, and the Rapid Update Cycle (RUC) Land Surface Model (LSM). The HWRF operational upgrade included a scale-aware Simplified Arakawa-Schubert (SAS) cumulus scheme, new Ferrier-Aligo microphysics schemes, and improved data assimilation. Other development and applications of WRF were also presented. Notably, the large-eddy simulation (LES) capability has been extended to many real-data applications in recent years.

Two discussions during the workshop were devoted to physics suites and model unification. The first covered the two suites of pre-selected physics combinations now available in V3.9, which are verified to work well together for weather prediction applications. The second was about model unification between WRF and the newer MPAS. While the two models remain independent, both are supported by the community and aspects of their development effort can be shared.

The next WRF Users’ Workshop will be held in June 2018.

Did you know?

A single column model (SCM) can be an easy, quick, and cheap way to test new or updated physics schemes

An SCM replaces advection from a dynamical core with forcing that approximates how the atmospheric column state changes due to large-scale horizontal winds. An atmospheric physics suite then calculates impacts to radiation, convection, microphysics, vertical diffusion, and other physical processes as the forcing alters the column state.
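The idea can be sketched with a toy column. This is not the GMTB SCM. It assumes a single variable (potential temperature on evenly spaced levels), a prescribed large-scale forcing tendency standing in for advection, and a simple vertical diffusion routine standing in for a full physics suite. All names and parameter values are hypothetical.

```python
def diffusion_tendency(theta, k_diff, dz):
    """Toy 'physics': vertical diffusion of theta (K/s) with zero flux
    through the bottom and top of the column."""
    n = len(theta)
    # Diffusive flux at each interior interface between levels i and i+1.
    interior = [-k_diff * (theta[i + 1] - theta[i]) / dz for i in range(n - 1)]
    flux = [0.0] + interior + [0.0]  # add the closed boundaries
    return [-(flux[i + 1] - flux[i]) / dz for i in range(n)]


def run_column(theta, forcing, n_steps, dt, k_diff=5.0, dz=100.0):
    """Step the column forward in time. 'forcing' is the prescribed
    large-scale tendency (K/s) that replaces dynamical-core advection;
    the physics then responds to the altered column state."""
    state = list(theta)
    for _ in range(n_steps):
        physics = diffusion_tendency(state, k_diff, dz)
        state = [s + dt * (f + p) for s, f, p in zip(state, forcing, physics)]
    return state


if __name__ == "__main__":
    theta0 = [300.0, 301.0, 305.0, 310.0]   # made-up initial profile (K)
    cooling = [-1.0e-4, -1.0e-4, 0.0, 0.0]  # made-up low-level cold advection
    print(run_column(theta0, cooling, n_steps=60, dt=60.0))
```

Because the forcing is prescribed, two physics options can be run against identical forcing simply by swapping the routine that computes the physics tendency.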

The SCM approach is conceptually simple, extremely quick to run (less than a minute on a laptop), and makes interpretation of results less ambiguous because it eliminates three-dimensional dynamical core feedbacks. It can also be relatively straightforward to compare how different physics respond to identical forcing and to perhaps provide evidence or justification for more expensive three-dimensional modeling tests.

The DTC’s Global Model Test Bed (GMTB) project built an SCM on top of the operational Global Forecast System (GFS) physics suite and used it as part of a physics test harness. It can be considered the simplest tier within a hierarchy of physics testing methods. Recently, it has been used to compare how the operational GFS suite performs compared to one with an advanced convective parameterization for simulations of maritime and continental deep convection.

The SCM code is available to collaborators on NOAA's VLab, and will be updated periodically to keep pace with changes in the operational FV3-GFS model. Additionally, as the Common Community Physics Package comes online in the near future, the SCM will be compatible with all physics within that framework.

Announcement

2018 Hurricane WRF Tutorial

The DTC is pleased to announce that registration is now open for the 2018 Hurricane WRF (HWRF) tutorial to be held 23–25 January 2018 at the NOAA Center for Weather and Climate Prediction (NCWCP) in College Park, MD. Registration, a draft agenda, and information about hotel accommodations and other logistics can be found on our tutorial website:

The HWRF tutorial will be a three-day event organized by the Developmental Testbed Center (DTC) and by the NOAA Environmental Modeling Center (EMC). The Hurricane Weather Research and Forecast system (HWRF) is a coupled atmosphere-ocean model suitable for tropical cyclone (TC) research and forecasting in all Northern and Southern Hemisphere ocean basins.

Tutorial participants can expect to hear lectures on all aspects of HWRF, including model physics and dynamics, nesting, initialization, coupling with the ocean, postprocessing, and vortex tracking. Additionally, enrichment lectures on HWRF's multistorm capability, TC verification, the HWRF ensemble system, and NCEP's future plans for TC numerical weather prediction will be presented. Practical sessions will give tutorial participants hands-on experience in running HWRF.

We look forward to seeing you in College Park. If you have any questions, please do not hesitate to contact:

Evan Kalina (NOAA DTC): Evan.Kalina@noaa.gov

Kathryn Newman (NCAR DTC): knewman@ucar.edu

Zhan Zhang (NOAA EMC): Zhan.Zhang@noaa.gov

Bin Liu (NOAA EMC): Bin.Liu@noaa.gov

HWRF Tutorial organizing committee

Software Release

Release of GSI Version 3.6 and EnKF Version 1.2

The Developmental Testbed Center (DTC) is pleased to announce the release of the following community data assimilation systems:

Version 3.6 of the Community Gridpoint Statistical Interpolation (GSI) data assimilation system

Version 1.2 of the Community Ensemble Kalman Filter (EnKF) data assimilation system

The released packages for GSI and EnKF and the documentation for each can be accessed through each system website as follows:

Please send questions and inquiries to the help desk emails: gsi-help@ucar.edu and enkf-help@ucar.edu.

GSI and EnKF are community data assimilation systems, open to contributions from both the operational and research communities. Please contact the helpdesk for assistance on gaining access to the development code repository and learning about code commit procedures.

Prospective contributors can also apply to the DTC visitor program for their data assimilation research and code transition. The visitor program is open to applications year-round. Please check the visitor program webpage (https://www.dtcenter.org/visitors/) for the latest announcement of opportunity and application procedures.

The DTC is a distributed facility, with a goal of bridging the research and operational communities. This code release is sponsored by the National Oceanic and Atmospheric Administration (NOAA) and the National Center for Atmospheric Research (NCAR). NCAR is supported by the National Science Foundation (NSF).

PROUD Awards

George McCabe is a Software Engineer III for the NSF NCAR Research Applications Laboratory whose skill and commitment have significantly improved the reliability, security, and operational readiness of DTC-supported verification systems. As the lead developer of the METplus Python Wrappers, George plays a central role in delivering a consistent, scalable, and reproducible verification system that is trusted by both research and operational communities.

George’s contributions extend beyond routine software development. He led efforts to resolve complex integration, automation, and cybersecurity challenges, keeping DTC-supported verification systems compliant, operationally viable, and on track for delivery within evolving security environments. His work has strengthened METplus as a robust, secure, and mission-ready framework while preserving usability for a broad and expanding user base.

In addition to METplus, George contributes to Air Force Verification and Validation on the Global Synthetic Weather Radar project and the Benchmarking Testing and Evaluation and Verification Framework, advancing scientifically rigorous, reproducible evaluation practices while reducing redundant effort across institutions. He is widely respected for his exceptional ability to translate complex technical and security challenges into practical, sustainable solutions, as well as for his calm, methodical approach to problem solving, particularly during high-pressure, high-impact efforts.

George’s initiative, technical leadership, and commitment to excellence consistently go beyond expectations, establishing him as a cornerstone of DTC software development and a profoundly deserving recipient of this recognition.
