Hurricane Sandy Case (27 Oct 2012)

Case overview

Reason for interest: Destructive hurricane

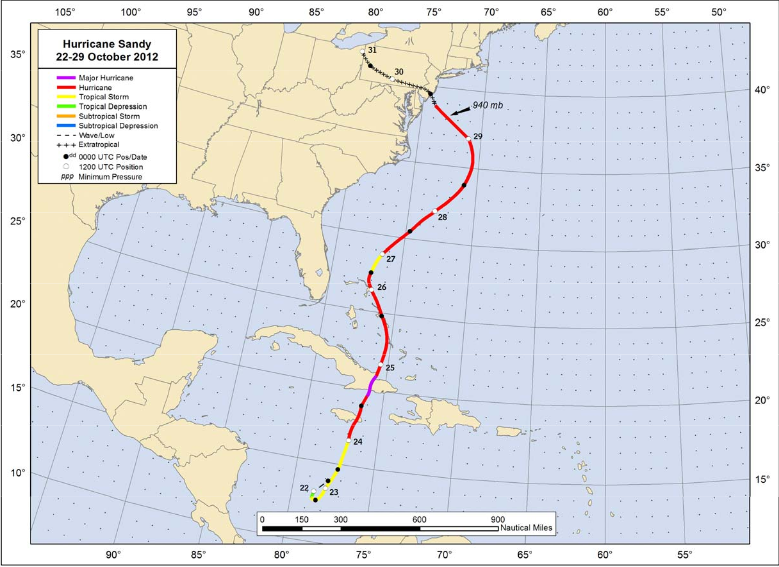

The deadliest and most destructive hurricane of the 2012 Atlantic hurricane season, Hurricane Sandy was a late-season storm that developed from a tropical wave in the western Caribbean. It quickly intensified into a tropical storm and made its first landfall over Jamaica as a Category 1 hurricane. As the storm moved northward, it continued to strengthen into a Category 3 hurricane before making landfall in eastern Cuba, and then weakened back to a Category 1 hurricane. Sandy weakened further to tropical-storm strength as it moved through the Bahamas, then began re-intensifying as it pushed northeastward, parallel to the United States coastline. Ultimately, Sandy curved west-northwest, maintaining its strength as it transitioned to an extratropical cyclone just before coming onshore in New Jersey with hurricane-force winds.

In total, more than 230 fatalities were directly or indirectly associated with Hurricane Sandy. Sandy affected the Caribbean, the Bahamas, Bermuda, the southeastern U.S., the Mid-Atlantic and New England states, and eastern Canada. Sandy was blamed for $65 billion in damage in the U.S. alone, making it the fourth-costliest hurricane in U.S. history as of August 2019.

NHC Best track positions for Hurricane Sandy, 22-29 October 2012:

Observed precipitation for 192 hours (12 UTC 24 Oct - 12 UTC 1 Nov 2012) courtesy of the NWS/WPC:

Set up environment


To run the Hurricane Sandy case, first set the environment variables for this case study.

If you have not already done so, navigate to the top-level experiment directory (where you have downloaded the container-dtc-nwp directory) and set the environment variables PROJ_DIR and PROJ_VERSION.

tcsh:

cd /home/ec2-user

setenv PROJ_DIR `pwd`

setenv PROJ_VERSION 4.1.0

bash:

cd /home/ec2-user

export PROJ_DIR=`pwd`

export PROJ_VERSION="4.1.0"

Then set up the variables and directories for the Sandy experiment:

tcsh:

setenv CASE_DIR ${PROJ_DIR}/sandy

bash:

export CASE_DIR=${PROJ_DIR}/sandy

Then, in either shell:

cd ${CASE_DIR}

mkdir -p wpsprd wrfprd gsiprd postprd pythonprd metprd metviewer/mysql
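If `CASE_DIR` is unset, `cd ${CASE_DIR}` quietly falls back to your home directory and the `mkdir` above creates the output directories there instead. As a sketch for bash users, the same setup can be wrapped in a function that refuses to run without `CASE_DIR`; the name `make_case_dirs` is hypothetical and not part of the container-dtc-nwp scripts.

```bash
# Hypothetical helper (not part of container-dtc-nwp): create the Sandy
# working directories under CASE_DIR, refusing to run if CASE_DIR is unset.
make_case_dirs() {
  # ${VAR:?msg} prints msg and aborts when CASE_DIR is unset or empty.
  : "${CASE_DIR:?CASE_DIR must be set first}"
  local d
  for d in wpsprd wrfprd gsiprd postprd pythonprd metprd metviewer/mysql; do
    mkdir -p "${CASE_DIR}/${d}" || return 1
  done
}
```

After setting `CASE_DIR`, run `make_case_dirs && cd "${CASE_DIR}"`.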

Extra step for Singularity users

Users of the Singularity containerization software will need to set a special variable for temporary files written by Singularity at runtime:

tcsh:

setenv TMPDIR ${PROJ_DIR}/sandy/tmp

bash:

export TMPDIR=${PROJ_DIR}/sandy/tmp
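The `mkdir` command earlier in this section does not create the `sandy/tmp` directory. Assuming Singularity expects `TMPDIR` to point at a directory that already exists, bash users could set and create it in one step with a small function like the one below; the name `use_case_tmpdir` is hypothetical.

```bash
# Hypothetical helper: point TMPDIR at a case-local scratch area and
# create that directory if it does not exist yet.
use_case_tmpdir() {
  : "${PROJ_DIR:?PROJ_DIR must be set first}"
  export TMPDIR="${PROJ_DIR}/sandy/tmp"
  mkdir -p "${TMPDIR}"
}
```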

Run NWP initialization components


The NWP workflow begins by creating the initial and boundary conditions for the WRF model run. This is done in two steps: first with the WPS programs (geogrid.exe, ungrib.exe, metgrid.exe), then with the WRF program real.exe.

Initialization Data

Global Forecast System (GFS) forecast files initialized at 18 UTC on 27 October 2012 (20121027), extending out to 48 hours in 3-hour increments, are provided for this case.

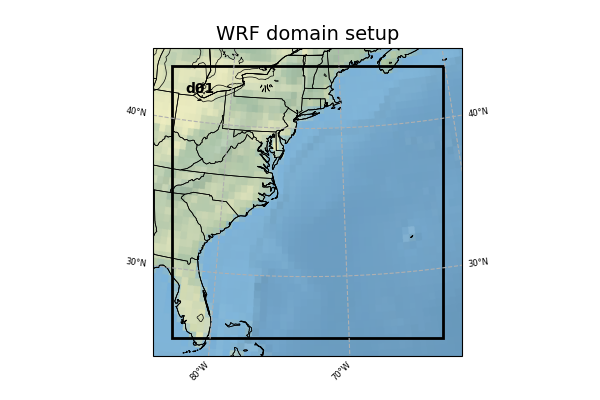

Model Domain

The WRF domain we have selected covers most of the east coast of the United States and a portion of the northwestern Atlantic Ocean. The exact domain is shown below:

Select the appropriate container instructions for your system below:

Step One (Optional): Run Python to Create Image of Domain

A Python script has been provided to plot the computational domain that is being run for this case. If desired, run the dtcenter/python container to execute Python in docker-space using the namelist.wps in the local scripts directory, mapping the output into the local pythonprd directory.

-v ${PROJ_DIR}/container-dtc-nwp/components/scripts/common:/home/scripts/common \
-v ${PROJ_DIR}/container-dtc-nwp/components/scripts/sandy_20121027:/home/scripts/case \
-v ${PROJ_DIR}/data/shapefiles:/home/data/shapefiles \
-v ${CASE_DIR}/pythonprd:/home/pythonprd \
--name run-sandy-python dtcenter/python:${PROJ_VERSION} \
/home/scripts/common/run_python_domain.ksh

A successful completion of the Python plotting script will result in the following file in the pythonprd directory. This is the same image that is shown at the top of the page showing the model domain.

Step Two: Run WPS

Using the previously downloaded data (in ${PROJ_DIR}/data), while pointing to the namelists in the local scripts directory, run the dtcenter/wps_wrf container to run WPS in docker-space and map the output into the local wpsprd directory.

-v ${PROJ_DIR}/data/WPS_GEOG:/data/WPS_GEOG \
-v ${PROJ_DIR}/data:/data -v ${PROJ_DIR}/container-dtc-nwp/components/scripts/common:/home/scripts/common \
-v ${PROJ_DIR}/container-dtc-nwp/components/scripts/sandy_20121027:/home/scripts/case \
-v ${CASE_DIR}/wpsprd:/home/wpsprd --name run-sandy-wps dtcenter/wps_wrf:${PROJ_VERSION} \
/home/scripts/common/run_wps.ksh

Once WPS begins running, you can watch the log files being generated in another window by setting the ${CASE_DIR} environment variable and tailing the log files:

Type CTRL-C to exit the tail utility.
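If you are not sure which log file to follow, a bash helper can pick the most recently modified one. This is a sketch: the function name `newest_log` is hypothetical, and it assumes the log names end in `.log` unless you pass a different glob pattern as the second argument.

```bash
# Hypothetical helper: print the newest file in DIR matching an optional
# glob pattern (default "*.log"); return non-zero if nothing matches.
newest_log() {
  local dir="$1" pattern="${2:-*.log}" newest="" f
  for f in "${dir}"/${pattern}; do
    [ -e "$f" ] || continue              # the glob matched nothing
    if [ -z "$newest" ] || [ "$f" -nt "$newest" ]; then
      newest="$f"
    fi
  done
  [ -n "$newest" ] && printf '%s\n' "$newest"
}
```

For example: `tail -f "$(newest_log "${CASE_DIR}/wpsprd")"`. The same helper can be pointed at the wrfprd, gsiprd, or postprd directories with a suitable pattern.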

A successful completion of the WPS steps will result in the following files (in addition to other files) in the wpsprd directory:

FILE:2012-10-27_18

FILE:2012-10-27_21

FILE:2012-10-28_00

met_em.d01.2012-10-27_18:00:00.nc

met_em.d01.2012-10-27_21:00:00.nc

met_em.d01.2012-10-28_00:00:00.nc
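A quick way to confirm that WPS finished is to check for the expected files listed above. The bash sketch below uses a hypothetical `check_outputs` helper that reports any missing names and returns a non-zero status if at least one is absent.

```bash
# Hypothetical helper: check that each named file exists in DIR.
# Missing files are reported on stderr; the return status is non-zero
# if any file is absent.
check_outputs() {
  local dir="$1"; shift
  local missing=0 f
  for f in "$@"; do
    if [ ! -e "${dir}/${f}" ]; then
      echo "missing: ${dir}/${f}" >&2
      missing=$((missing + 1))
    fi
  done
  [ "$missing" -eq 0 ]
}
```

For example: `check_outputs "${CASE_DIR}/wpsprd" FILE:2012-10-27_18 FILE:2012-10-27_21 FILE:2012-10-28_00 met_em.d01.2012-10-27_18:00:00.nc met_em.d01.2012-10-27_21:00:00.nc met_em.d01.2012-10-28_00:00:00.nc`.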

Step Three: Run real.exe

Using the previously downloaded data (in ${PROJ_DIR}/data), the output from WPS in step two, and the namelists in the local scripts directory, run the dtcenter/wps_wrf container, this time to run real.exe in docker-space, and map the output into the local wrfprd directory.

-v ${PROJ_DIR}/data:/data -v ${PROJ_DIR}/container-dtc-nwp/components/scripts/common:/home/scripts/common \
-v ${PROJ_DIR}/container-dtc-nwp/components/scripts/sandy_20121027:/home/scripts/case \
-v ${CASE_DIR}/wpsprd:/home/wpsprd \
-v ${CASE_DIR}/wrfprd:/home/wrfprd --name run-sandy-real dtcenter/wps_wrf:${PROJ_VERSION} \
/home/scripts/common/run_real.ksh

The real.exe program should take less than a minute to run, but you can follow its progress as well in the wrfprd directory:

Type CTRL-C to exit the tail utility.

A successful completion of the REAL step will result in the following files (in addition to other files) in the wrfprd directory:

wrfinput_d01
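Beyond checking that `wrfinput_d01` exists, WRF's real.exe normally ends its log with a line containing `SUCCESS COMPLETE REAL_EM INIT`. The bash sketch below greps for that string with a hypothetical `log_has_success` helper. Which log file the container scripts actually write (for example an `rsl.error.0000` file, or a differently named log in wrfprd) is an assumption; check the directory contents first.

```bash
# Hypothetical helper: succeed if any of the given log files contains
# PATTERN as a fixed string (-F); unreadable files are ignored (-s).
log_has_success() {
  local pattern="$1"; shift
  grep -qsF -- "$pattern" "$@"
}
```

For example: `log_has_success "SUCCESS COMPLETE REAL_EM INIT" "${CASE_DIR}"/wrfprd/rsl.error.* && echo "real.exe finished"`. The same check with the string `SUCCESS COMPLETE WRF` can be applied after wrf.exe runs.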

Step One (Optional): Run Python to Create Image of Domain

A Python script has been provided to plot the computational domain that is being run for this case. If desired, run the dtcenter/python container to execute Python in singularity-space using the namelist.wps in the local scripts directory, mapping the output into the local pythonprd directory.

A successful completion of the Python plotting script will result in the following file in the pythonprd directory. This is the same image that is shown at the top of the page showing the model domain.

Step Two: Run WPS

Using the previously downloaded data (in ${PROJ_DIR}/data), while pointing to the namelists in the local scripts directory, run the wps_wrf container to run WPS in singularity-space and map the output into the local wpsprd directory.

Once WPS begins running, you can watch the log files being generated in another window by setting the ${CASE_DIR} environment variable and tailing the log files:

Type CTRL-C to exit the tail utility.

A successful completion of the WPS steps will result in the following files (in addition to other files) in the wpsprd directory:

FILE:2012-10-27_18

FILE:2012-10-27_21

FILE:2012-10-28_00

met_em.d01.2012-10-27_18:00:00.nc

met_em.d01.2012-10-27_21:00:00.nc

met_em.d01.2012-10-28_00:00:00.nc

Step Three: Run real.exe

Using the previously downloaded data (in ${PROJ_DIR}/data), the output from WPS in step two, and the namelists in the local scripts directory, run the wps_wrf container, this time to run real.exe in singularity-space, and map the output into the local wrfprd directory.

The real.exe program should take less than a minute to run, but you can follow its progress as well in the wrfprd directory:

Type CTRL-C to exit the tail utility.

A successful completion of the REAL step will result in the following files (in addition to other files) in the wrfprd directory:

wrfinput_d01

Run data assimilation


The next step in the NWP workflow is to run GSI data assimilation to improve the initial conditions for the WRF model run. GSI (gsi.exe) updates the wrfinput file created by real.exe.

Select the appropriate container instructions for your system below:

Using the previously downloaded data (in ${PROJ_DIR}/data), while pointing to the namelist in the local scripts directory, run the dtcenter/gsi container to run GSI in docker-space and map the output into the local gsiprd directory:

As GSI runs, the output files will appear in the local gsiprd/ directory. Please review the contents of that directory to interrogate the data.

Once GSI begins running, you can watch the log file being generated in another window by setting the ${CASE_DIR} environment variable and tailing the log file:

Type CTRL-C to exit the tail.

A successful completion of the GSI step will result in the following files (in addition to other files) in the gsiprd directory:

berror_stats

diag_*

fit_*

fort*

gsiparm.anl

*info

list_run_directory

prepbufr

satbias*

stdout*

wrf_inout

wrfanl.2012102718
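Several of the GSI outputs listed above are glob patterns rather than fixed names. The bash sketch below (a hypothetical `check_globs` helper) checks that each pattern matches at least one file in gsiprd, relying on the fact that an unmatched glob is left as literal text.

```bash
# Hypothetical helper: verify that each glob pattern (or literal name)
# matches at least one existing file in DIR.
check_globs() {
  local dir="$1"; shift
  local status=0 p matches
  for p in "$@"; do
    matches=( "${dir}"/${p} )            # unquoted ${p} so the glob expands
    if [ ! -e "${matches[0]}" ]; then    # unmatched globs stay literal
      echo "no match: ${dir}/${p}" >&2
      status=1
    fi
  done
  return "$status"
}
```

For example: `check_globs "${CASE_DIR}/gsiprd" 'diag_*' 'fit_*' 'fort*' '*info' 'satbias*' 'stdout*' wrf_inout wrfanl.2012102718`.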

Using the previously downloaded data (in ${PROJ_DIR}/data), while pointing to the namelist in the local scripts directory, run the gsi container to run GSI in singularity-space and map the output into the local gsiprd directory:

As GSI runs, the output files will appear in the local gsiprd/ directory. Please review the contents of that directory to interrogate the data.

Once GSI begins running, you can watch the log file being generated in another window by setting the ${CASE_DIR} environment variable and tailing the log file:

Type CTRL-C to exit the tail.

A successful completion of the GSI step will result in the following files (in addition to other files) in the gsiprd directory:

berror_stats

diag_*

fit_*

fort*

gsiparm.anl

*info

list_run_directory

prepbufr

satbias*

stdout*

wrf_inout

wrfanl.2012102718

Run NWP model


To integrate the WRF forecast model through time, we use the wrf.exe program, pointing it to the initial and boundary condition files created in the previous initialization step (and, optionally, updated by the data assimilation step).

Select the appropriate container instructions for your system below:

Using the previously downloaded data (in ${PROJ_DIR}/data), while pointing to the namelists in the local scripts directory, run the dtcenter/wps_wrf container to run WRF in docker-space and map the output into the local wrfprd directory.

Option One: Default number (4) of processors

By default WRF will run with 4 processors using the following command:

-v ${PROJ_DIR}/container-dtc-nwp/components/scripts/common:/home/scripts/common \
-v ${PROJ_DIR}/container-dtc-nwp/components/scripts/sandy_20121027:/home/scripts/case \
-v ${CASE_DIR}/wpsprd:/home/wpsprd -v ${CASE_DIR}/gsiprd:/home/gsiprd -v ${CASE_DIR}/wrfprd:/home/wrfprd \
--name run-sandy-wrf dtcenter/wps_wrf:${PROJ_VERSION} /home/scripts/common/run_wrf.ksh

Option Two: User-specified number of processors

If you run into trouble on your machine when using 4 processors, you may want to run with fewer (or more!) processors by passing the "-np #" option to the script. For example, the following command runs with 2 processors:

-v ${PROJ_DIR}/container-dtc-nwp/components/scripts/common:/home/scripts/common \
-v ${PROJ_DIR}/container-dtc-nwp/components/scripts/sandy_20121027:/home/scripts/case \
-v ${CASE_DIR}/wpsprd:/home/wpsprd -v ${CASE_DIR}/gsiprd:/home/gsiprd -v ${CASE_DIR}/wrfprd:/home/wrfprd \
--name run-sandy-wrf dtcenter/wps_wrf:${PROJ_VERSION} /home/scripts/common/run_wrf.ksh -np 2
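If you are unsure how many processors to request, one option is to cap the default of 4 at the number of cores available. This bash sketch is an assumption, not part of the provided scripts; the helper name `pick_np` is hypothetical, and it calls `nproc` from GNU coreutils when no core count is passed in.

```bash
# Hypothetical helper: choose a task count no larger than 4 (the script's
# default) or the number of available cores, whichever is smaller.
pick_np() {
  local cores="${1:-$(nproc)}" max=4
  if [ "$cores" -lt "$max" ]; then
    echo "$cores"
  else
    echo "$max"
  fi
}
```

The result can then be passed to the script in place of the fixed value, e.g. `run_wrf.ksh -np "$(pick_np)"`.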

As WRF runs, the NetCDF output files will appear in the local wrfprd/ directory. Please review the contents of that directory to interrogate the data.

Once WRF begins running, you can watch the log file being generated in another window by setting the ${CASE_DIR} environment variable and tailing the log file:

Type CTRL-C to exit the tail.

A successful completion of the WRF step will result in the following files (in addition to other files) in the wrfprd directory:

wrfout_d01_2012-10-27_19_00_00.nc

wrfout_d01_2012-10-27_20_00_00.nc

wrfout_d01_2012-10-27_21_00_00.nc

wrfout_d01_2012-10-27_22_00_00.nc

wrfout_d01_2012-10-27_23_00_00.nc

wrfout_d01_2012-10-28_00_00_00.nc
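The WRF output above is hourly, from 19 UTC on 27 October through 00 UTC on 28 October 2012, i.e. hours 1 to 6 after the 18 UTC start. A bash sketch that generates those expected names from the start time; the helper name is hypothetical, and it assumes GNU `date` for the `-d` and `@epoch` syntax.

```bash
# Hypothetical helper: print wrfout_d01 file names for each hour 1..HOURS
# after START, where START is a UTC date/time string such as
# "2012-10-27 18:00:00". Requires GNU date.
expected_wrfout() {
  local start="$1" hours="$2" base h
  base=$(date -u -d "$start" +%s) || return 1
  for ((h = 1; h <= hours; h++)); do
    date -u -d "@$((base + h * 3600))" +"wrfout_d01_%Y-%m-%d_%H_%M_%S.nc"
  done
}
```

For example, from inside `${CASE_DIR}/wrfprd`: `for f in $(expected_wrfout "2012-10-27 18:00:00" 6); do [ -e "$f" ] || echo "missing: $f"; done`.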

Using the previously downloaded data in ${PROJ_DIR}/data while pointing to the namelists in the local scripts directory, run the wps_wrf container to run WRF in singularity-space and map the output into the local wrfprd directory.

Option One: Default number (4) of processors

By default WRF will run with 4 processors using the following command:

Option Two: User-specified number of processors

If you run into trouble on your machine when using 4 processors, you may want to run with fewer (or more!) processors by passing the "-np #" option to the script. For example, the following command runs with 2 processors:

As WRF runs, the NetCDF output files will appear in the local wrfprd/ directory. Please review the contents of that directory to interrogate the data.

Once WRF begins running, you can watch the log file being generated in another window by setting the ${CASE_DIR} environment variable and tailing the log file:

Type CTRL-C to exit the tail.

A successful completion of the WRF step will result in the following files (in addition to other files) in the wrfprd directory:

wrfout_d01_2012-10-27_19_00_00.nc

wrfout_d01_2012-10-27_20_00_00.nc

wrfout_d01_2012-10-27_21_00_00.nc

wrfout_d01_2012-10-27_22_00_00.nc

wrfout_d01_2012-10-27_23_00_00.nc

wrfout_d01_2012-10-28_00_00_00.nc

Postprocess NWP data


After the WRF model is run, its output is processed with the Unified Post Processor (UPP), which interpolates the model output to new vertical coordinates (e.g., pressure levels) and computes a number of diagnostic variables, writing the results in GRIB2 format.

Select the appropriate container instructions for your system below:

Using the previously created WRF netCDF data in the wrfprd directory, while pointing to the namelist in the local scripts directory, run the dtcenter/upp container to run UPP in docker-space to post-process the WRF data into grib2 format, and map the output into the local postprd directory:

-v ${PROJ_DIR}/container-dtc-nwp/components/scripts/common:/home/scripts/common \
-v ${PROJ_DIR}/container-dtc-nwp/components/scripts/sandy_20121027:/home/scripts/case \
-v ${CASE_DIR}/wrfprd:/home/wrfprd -v ${CASE_DIR}/postprd:/home/postprd \
--name run-sandy-upp dtcenter/upp:${PROJ_VERSION} /home/scripts/common/run_upp.ksh

As UPP runs, the post-processed GRIB2 output files will appear in the local postprd/ directory. Please review the contents of that directory to interrogate the data.

UPP runs quickly for each forecast hour, but you can see the log files generated in another window by setting the ${CASE_DIR} environment variable and tailing the log file:

Type CTRL-C to exit the tail.

A successful completion of the UPP step will result in the following files (in addition to other files) in the postprd directory:

wrfprs_d01.01

wrfprs_d01.02

wrfprs_d01.03

wrfprs_d01.04

wrfprs_d01.05

wrfprs_d01.06
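Each GRIB2 message begins with an indicator section: the four ASCII characters `GRIB`, with the GRIB edition number in octet 8. The bash sketch below (a hypothetical `is_grib2` helper using `head` and `od`) checks that the first message of a UPP output file carries that signature; it does not validate the rest of the file.

```bash
# Hypothetical helper: succeed if FILE starts with a GRIB edition 2
# indicator section ("GRIB" in octets 1-4, edition number 2 in octet 8).
is_grib2() {
  local file="$1" magic edition
  magic=$(head -c 4 "$file" 2>/dev/null) || return 1
  [ "$magic" = "GRIB" ] || return 1
  # Skip 7 bytes and read octet 8 as an unsigned decimal byte.
  edition=$(od -An -tu1 -j7 -N1 "$file" | tr -d ' ')
  [ "$edition" = "2" ]
}
```

For example: `for f in "${CASE_DIR}"/postprd/wrfprs_d01.*; do is_grib2 "$f" || echo "not GRIB2: $f"; done`.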

Using the previously created WRF netCDF data in the wrfprd directory, while pointing to the namelists in the local scripts directory, create a singularity container using the upp_3.5 image to run UPP in singularity-space to post-process the WRF data into grib2 format, and map the output into the local postprd directory:

As UPP runs, the post-processed GRIB2 output files will appear in the local postprd/ directory. Please review the contents of that directory to interrogate the data.

UPP runs quickly for each forecast hour, but you can see the log files generated in another window by setting the ${CASE_DIR} environment variable and tailing the log file:

Type CTRL-C to exit the tail.

A successful completion of the UPP step will result in the following files (in addition to other files) in the postprd directory:

wrfprs_d01.01

wrfprs_d01.02

wrfprs_d01.03

wrfprs_d01.04

wrfprs_d01.05

wrfprs_d01.06

Create graphics


After the model output is post-processed with UPP, the forecast fields can be visualized using Python. The plotting capabilities include generating graphics for near-surface and upper-air variables as well as accumulated precipitation, reflectivity, helicity, and CAPE.

Select the appropriate container instructions for your system below:

Pointing to the scripts in the local scripts directory, run the dtcenter/python container to create graphics in docker-space and map the images into the local pythonprd directory:

-v ${PROJ_DIR}/container-dtc-nwp/components/scripts/common:/home/scripts/common \
-v ${PROJ_DIR}/container-dtc-nwp/components/scripts/sandy_20121027:/home/scripts/case \
-v ${PROJ_DIR}/data/shapefiles:/home/data/shapefiles \
-v ${CASE_DIR}/postprd:/home/postprd -v ${CASE_DIR}/pythonprd:/home/pythonprd \
--name run-sandy-python dtcenter/python:${PROJ_VERSION} /home/scripts/common/run_python.ksh

After Python has been run, the PNG image files will appear in the local pythonprd/ directory:

250wind_d01_f*.png

2mdew_d01_f*.png

2mt_d01_f*.png

500_d01_f*.png

maxuh25_d01_f*.png

qpf_d01_f*.png

refc_d01_f*.png

sfcape_d01_f*.png

slp_d01_f*.png
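To see at a glance whether every product listed above was drawn, the bash sketch below (a hypothetical `count_pngs` helper) counts the PNG files for each product prefix, assuming the `<prefix>_d01_f*.png` naming shown in the list.

```bash
# Hypothetical helper: print "<prefix> <count>" for each product prefix,
# counting files named <prefix>_d01_f*.png in DIR.
count_pngs() {
  local dir="$1"; shift
  local prefix files
  for prefix in "$@"; do
    files=( "${dir}/${prefix}"_d01_f*.png )
    if [ -e "${files[0]}" ]; then
      echo "${prefix} ${#files[@]}"
    else
      echo "${prefix} 0"               # unmatched glob stays literal
    fi
  done
}
```

For example: `count_pngs "${CASE_DIR}/pythonprd" 250wind 2mdew 2mt 500 maxuh25 qpf refc sfcape slp`.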

The images may be visualized using your favorite display tool.

Pointing to the scripts in the local scripts directory, create a container using the python singularity image to create graphics in singularity-space and map the images into the local pythonprd directory:

After Python has been run, the PNG image files will appear in the local pythonprd/ directory:

250wind_d01_f*.png

2mdew_d01_f*.png

2mt_d01_f*.png

500_d01_f*.png

maxuh25_d01_f*.png

qpf_d01_f*.png

refc_d01_f*.png

sfcape_d01_f*.png

slp_d01_f*.png

The images may be visualized using your favorite display tool.

Run verification software


After the model output is post-processed with UPP, it is run through the Model Evaluation Tools (MET) software to quantify its performance relative to observations. State variables, including temperature, dewpoint, and wind, are verified against both surface and upper-air point observations, while precipitation is verified against a gridded analysis.

Select the appropriate container instructions for your system below:

Using the previously downloaded data (in ${PROJ_DIR}/data), while pointing to the output in the local scripts and postprd directories, run the dtcenter/nwp-container-met container to run the verification software in docker-space and map the statistical output into the local metprd directory:

-v ${PROJ_DIR}/data:/data \
-v ${PROJ_DIR}/container-dtc-nwp/components/scripts/common:/home/scripts/common \
-v ${PROJ_DIR}/container-dtc-nwp/components/scripts/sandy_20121027:/home/scripts/case \
-v ${CASE_DIR}/postprd:/home/postprd -v ${CASE_DIR}/metprd:/home/metprd \
--name run-sandy-met dtcenter/nwp-container-met:${PROJ_VERSION} /home/scripts/common/run_met.ksh

MET will write a variety of ASCII and netCDF output files to the local metprd/. Please review the contents of the directories: grid_stat, pb2nc, pcp_combine, and point_stat, to interrogate the data.

grid_stat/grid_stat*.stat

pb2nc/prepbufr*.nc

pcp_combine/ST2*.nc

pcp_combine/wrfprs*.nc

point_stat/point_stat*.stat
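MET .stat files are whitespace-delimited text whose first row is a header that includes a `LINE_TYPE` column. The bash/awk sketch below (a hypothetical `count_line_types` helper) tallies data rows by line type, locating that column from each file's header rather than assuming a fixed position.

```bash
# Hypothetical helper: count data rows per LINE_TYPE across MET .stat files.
count_line_types() {
  awk '
    FNR == 1 {                         # header row of each input file
      col = 0
      for (i = 1; i <= NF; i++) if ($i == "LINE_TYPE") col = i
      next
    }
    col > 0 { n[$col]++ }              # tally the line type of each data row
    END { for (t in n) print t, n[t] }
  ' "$@" | sort
}
```

For example: `count_line_types "${CASE_DIR}"/metprd/point_stat/point_stat*.stat`.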

Using the previously downloaded data (in ${PROJ_DIR}/data), while pointing to the output in the local scripts and postprd directories, create a container using the nwp-container-met image to run the verification software in singularity-space and map the statistical output into the local metprd directory:

MET will write a variety of ASCII and netCDF output files to the local metprd/. Please review the contents of the directories: grid_stat, pb2nc, pcp_combine, and point_stat, to interrogate the data.

grid_stat/grid_stat*.stat

pb2nc/prepbufr*.nc

pcp_combine/ST2*.nc

pcp_combine/wrfprs*.nc

point_stat/point_stat*.stat

Visualize verification results


The METviewer software provides a database and display system for visualizing the statistical output generated by MET. After starting the METviewer service, a new database is created into which the MET output is loaded. Plots of the verification statistics are created by interacting with a web-based graphical interface.

Select the appropriate container instructions for your system below:

To visualize the MET output using the METviewer database and display system, you first need to launch the METviewer container:

docker-compose up -d

Note: you may need to wait 1-2 minutes prior to running the next command, as some processes starting up in the background may be slow.
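Instead of waiting a fixed 1-2 minutes, you can poll until the service answers. The bash sketch below defines a generic, hypothetical `retry` helper. The commented example assumes METviewer is served on port 8080 of the local machine (the same port as in the AWS URL given later in this section) and that `curl` is installed.

```bash
# Hypothetical helper: run a command up to TRIES times, sleeping DELAY
# seconds between failed attempts; succeed as soon as the command does.
retry() {
  local tries="$1" delay="$2" i
  shift 2
  for ((i = 1; i <= tries; i++)); do
    "$@" && return 0
    [ "$i" -lt "$tries" ] && sleep "$delay"
  done
  return 1
}

# Example (assumed local URL): wait up to about 2 minutes for METviewer.
# retry 12 10 curl -sf -o /dev/null http://localhost:8080/metviewer/metviewer1.jsp
```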

The MET statistical output then needs to be loaded into the MySQL database for querying and plotting by METviewer:

The METviewer GUI can then be accessed with the following URL copied and pasted into your web browser:

Note: if you are running on AWS, run the following commands to reconfigure METviewer with your current IP address and restart the web service:

docker exec -it metviewer /bin/bash

/scripts/common/reset_metv_url.ksh

exit

The METviewer GUI can then be accessed by copying and pasting the following URL into your web browser (where IPV4_public_IP is the IPv4 Public IP shown on the AWS "Active Instances" web page):

http://IPV4_public_IP:8080/metviewer/metviewer1.jsp

The METviewer GUI can be run interactively to create verification plots on the fly. However, to get you going, two sample plots are provided. Do the following in the METviewer GUI:

- Click the "Choose File" button and navigate on your file system to:

  ${PROJ_DIR}/container-dtc-nwp/components/scripts/sandy_20121027/metviewer/plot_APCP_03_ETS.xml

- Click "OK" to load the XML into the GUI and populate all the required options.

- Click the "Generate Plot" button at the top of the page to create the image.

Next, follow the same steps to create a plot of 10-meter wind components with this XML file:

Feel free to make changes in the METviewer GUI and use the "Generate Plot" button to make new plots.

To visualize the MET output using the METviewer database and display system, you first need to build a Singularity sandbox from the Docker image using the --fix-perms option. This step creates a metv4singularity directory.

singularity build --sandbox --fix-perms --force metv4singularity docker://dtcenter/nwp-container-metviewer-for-singularity:${PROJ_VERSION}

Next, start the Singularity instance as 'writable' and call it 'metv':

Then, initialize and start MariaDB and Tomcat:

Then, navigate to the scripts area and run a shell in the Singularity container:

singularity shell instance://metv

Next, load the MET output into a METviewer database. The metv_load_singularity.ksh script requires two command-line arguments: 1) the name of the METviewer database (e.g., mv_sandy), and 2) the value of ${CASE_DIR}.

The METviewer GUI can then be accessed by copying and pasting the following URL into your web browser (where IPV4_public_IP is the IPv4 Public IP shown on the AWS "Active Instances" web page):

http://IPV4_public_IP:8080/metviewer/metviewer1.jsp

The METviewer GUI can be run interactively to create verification plots on the fly. However, to get you going, two sample plots are provided. Do the following in the METviewer GUI:

- Click the "Choose File" button and navigate on your file system to:

  ${PROJ_DIR}/container-dtc-nwp/components/scripts/sandy_20121027/metviewer/plot_APCP_03_ETS.xml

- Click "OK" to load the XML into the GUI and populate all the required options.

- Click the "Generate Plot" button at the top of the page to create the image.

Next, follow the same steps to create a plot of 10-meter wind components with this XML file:

Feel free to make changes in the METviewer GUI and use the "Generate Plot" button to make new plots.

You can also create plots with the METviewer batch plotting capability (i.e., without the METviewer GUI). A script that runs the two supplied METviewer XMLs shows how to create plots this way. Note that you must be in your METviewer Singularity shell to run it:

cd ${PROJ_DIR}/container-dtc-nwp/components/scripts/sandy_20121027/metviewer

./metv_plot_singularity.ksh ${CASE_DIR}

The output is written to ${CASE_DIR}/metviewer/plots, and you can view the images with display (part of ImageMagick).